Regulate the Use of AI in Talent Software

The Issue

Follow on Instagram: @aeri4fairai

We are not against the use of AI in principle. However, we implore you to read the articles we’ve shared that detail how AI systems are making critical employment decisions based on predictions tied to names, ages, sex, race, and other protected characteristics.

Regulations are urgently needed to enforce transparency and to eliminate learning models that rely on characteristics unrelated to job experience or qualifications.

THE ISSUE

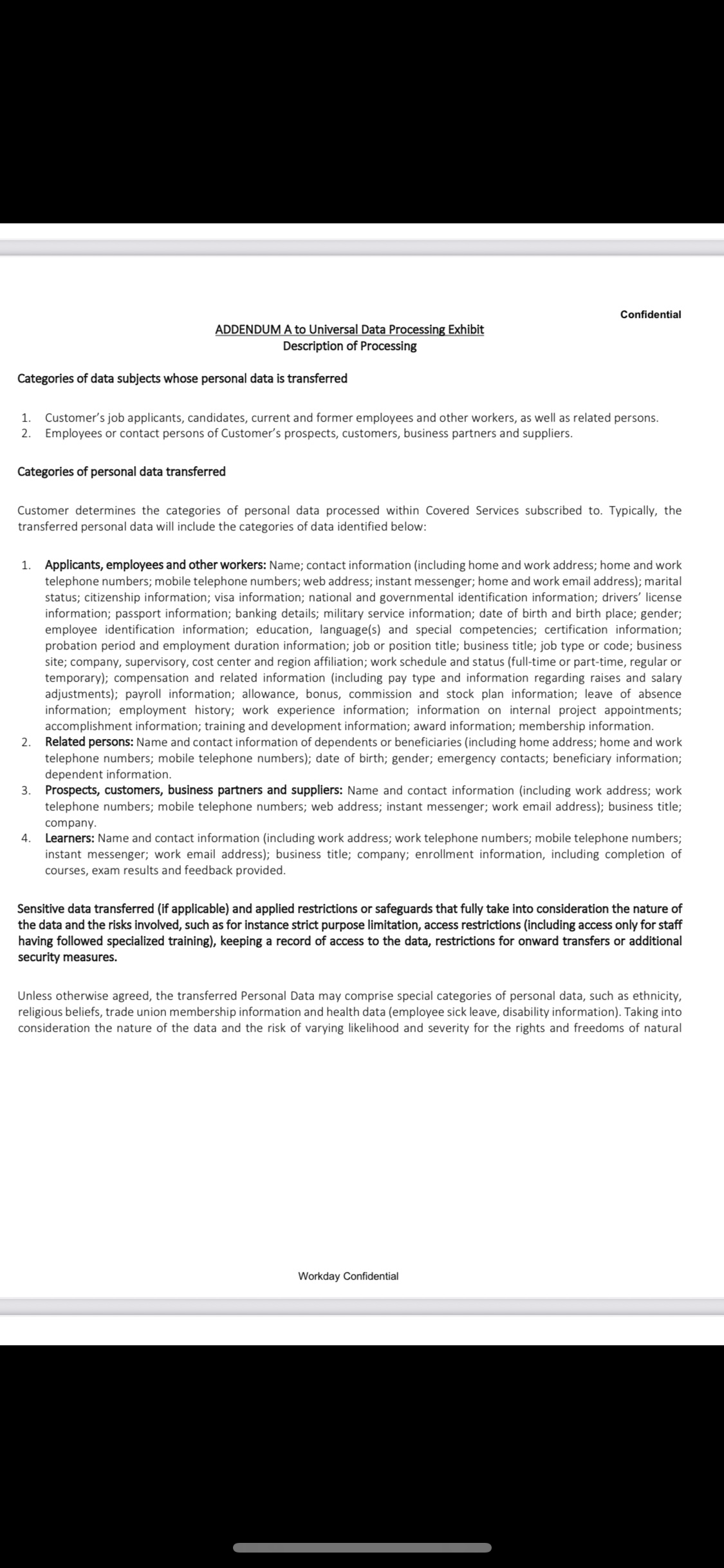

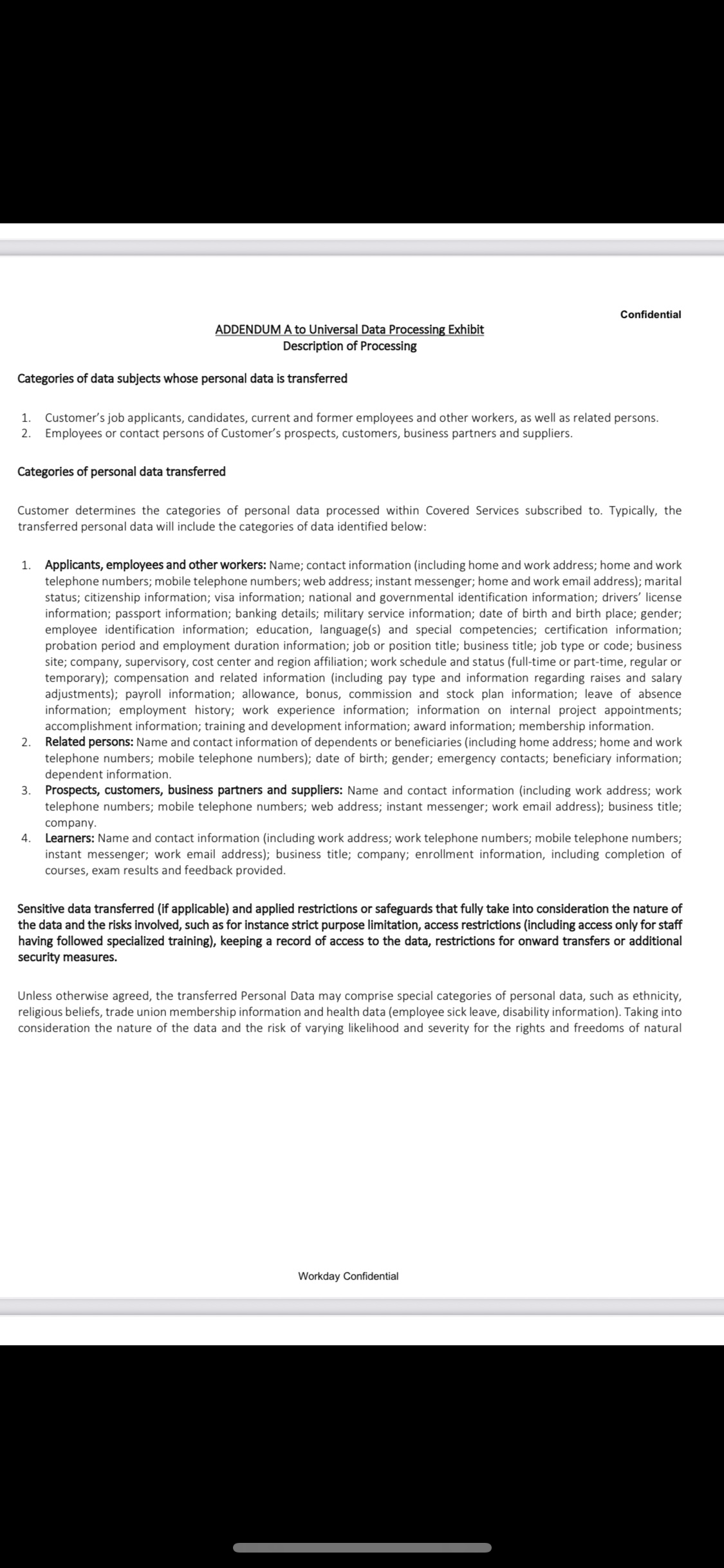

If you’ve ever applied for a job through a company that uses Workday’s applicant tracking system, your personal data may have been harvested and shared, without your knowledge or explicit consent, to fuel AI and machine learning (ML) hiring models.

Workday’s own Data Processing Exhibit (included in their Information Sharing Agreements, or “ISAs”) confirms that they collect and transfer:

Data on job applicants, current and former employees, prospects, and even beneficiaries

Sensitive personal data such as ethnicity, union membership, health information, performance history, salary, passport and military records, marital status, and more

Data used to train AI/ML scoring systems that screen, rank, and filter candidates, often before a human ever sees an application

No consent. No transparency. No opt-out.

WHY THIS MATTERS

These AI-driven hiring systems are black boxes—unregulated, unaccountable, and often discriminatory. By secretly building “shadow profiles” of job seekers, Workday and its corporate clients create hiring models that:

Exclude candidates without explanation

Reinforce bias against protected groups

Impact careers by flagging or blocking applicants across multiple employers

Violate privacy rights and may breach laws such as GDPR, FCRA, and Title VII

This is not just a data privacy issue—it’s a human rights and economic justice issue.

OUR DEMANDS

We call on regulators and lawmakers to:

Investigate Workday’s data collection, sharing, and algorithmic hiring practices under U.S. and international privacy and anti-discrimination laws.

Require informed, opt-in consent before any personal data is transferred for AI/ML training.

Ban algorithmic hiring systems that filter applicants before a qualified human review.

Require full transparency into what data is collected, how it’s scored, and how long it’s retained.

Provide a legal right to access and delete all personal data used to train Workday’s models.

SIGN THIS PETITION to protect the rights of job seekers, workers, and their families. We deserve fair hiring, data privacy, and accountability from the systems that decide our futures.

#BlackBoxHiring #AIAccountability #WorkdayISA #DataRights #JobSeekerJustice #BanAlgorithmicBias #StopDataAbuse #FairHiringNow

Your CV Doesn’t Matter—Your Shadow Profile Already Speaks for You

Think your CV is the key to landing a job? Think again. By the time you hit "apply," hiring systems have likely already scored you based on shadow profiles built from third-party data brokers.

Here’s how it works:

Your digital footprint—social media, past applications, online behavior—feeds data vendors.

This data is sold to talent software and enterprise resource planning (ERP) systems.

Integrated hiring tools pre-score you before your CV is even reviewed.

The reality? Your career history, skills, and even “fit” for a role have been algorithmically decided before a recruiter even looks at your name.

So, does your CV still matter? Only as a formality. The real game is happening behind the scenes—without transparency or consent.

AI-driven hiring platforms collect and share data on user behavior, skills, and social network activity to score job candidates, often without transparency.

AI analyzes video interviews, facial expressions, and voice tone for hiring decisions.

AI hiring models lack transparency, violating GDPR and U.S. employment laws.

AI models infer and process candidates’ skills without user control, violating data transparency laws.

Video Interview Facial Recognition (potential BIPA violation).

Voice Pattern & Emotional Analysis (New York biometric law risks).

As someone personally impacted by the unregulated use of AI in hiring software, I’ve experienced the devastating effects these systems can have on people from protected classes. Individuals who are older, part of minority racial groups, or living with disabilities face heightened risks of poverty, declining health, and loss of livelihoods. For many, this leads to a downward spiral into homelessness. This silent suffering cannot continue.

While some states have taken steps to regulate the use of AI in hiring, these fragmented efforts fall tragically short on a national level. Our collective stories reveal a deeply entrenched problem: AI-driven hiring practices are perpetuating systemic discrimination. The efficiency of artificial intelligence cannot be prioritized over equity and justice in employment opportunities.

AI screening systems are now used in recruitment by over 75% of Fortune 500 companies, and 83% of resumes are filtered before reaching human review. This technology affects an estimated 85 million job seekers annually through shadow profiles. AI screening is not limited to high-level roles; entry-level positions increasingly rely on it, raising concerns about demographic biases and historical hiring inequities. By 2025, over 90% of large employers are expected to use AI evaluation [1], [2]. In summary, AI in recruitment poses challenges related to bias and fairness, despite its efficiency.

We are petitioning for comprehensive regulation of AI in hiring practices and calling for an immediate halt to its use until these regulations are firmly in place. Your signature represents more than support; it’s your voice joining ours in a fight for fairness. If you’ve been affected by long-term unemployment, please share your story as well. Together, we can demand change.

Please sign this petition and help us make our voices heard. Together, we can create a future where fairness, not bias, dictates employment opportunities.

[1] (2024). VirtualHR: AI-Driven Automation for Efficient and Unbiased Candidate Recruitment in Software Engineering Roles. International Research Journal of Modernization in Engineering Technology and Science.

[2] Rahman, S. M., Hossain, M. A., Miah, M. S., Alom, M., Islam, M. (2025). Artificial Intelligence (AI) in Revolutionizing Sustainable Recruitment: A Framework for Inclusivity and Efficiency. International Research Journal of Multidisciplinary Scope.

https://www.npr.org/2022/05/12/1098601458/artificial-intelligence-job-discrimination-disabilities

https://genderpolicyreport.umn.edu/algorithmic-bias-in-job-hiring/

https://www.bbc.com/worklife/article/20240214-ai-recruiting-hiring-software-bias-discrimination

https://www.fisherphillips.com/en/news-insights/ai-resume-screeners.html

Petition created on December 20, 2024