Artist Rights & Platform Accountability Act

The Issue

Independent musicians are being erased from streaming platforms without warning, evidence, or appeal.

I’m Kenan Ali Erkan — an independent artist, producer, and audio engineer from Rochester, NY, releasing music under the name Ali Prod®.

In 2023, a single fraud accusation from Spotify — with no evidence and no chance to respond — resulted in my entire catalog being removed across all platforms.

No warning.

No appeal.

No royalties.

Years of work erased overnight.

And I’m not alone.

Behind the scenes, artists are being flagged, removed, and silenced by automated systems with no transparency and no real oversight. Meanwhile, the same ecosystem continues to profit from bot traffic, fraudulent playlists, and artificial engagement.

That’s why I created the Artist Rights & Platform Accountability Act.

We’ve now reached 1,000+ supporters, and this is just the beginning.

I am fully committed to pushing this forward — not just as an idea, but as a real legislative solution to protect independent artists.

If you believe in this, keep sharing, commenting, and spreading awareness.

We’re building something that matters.

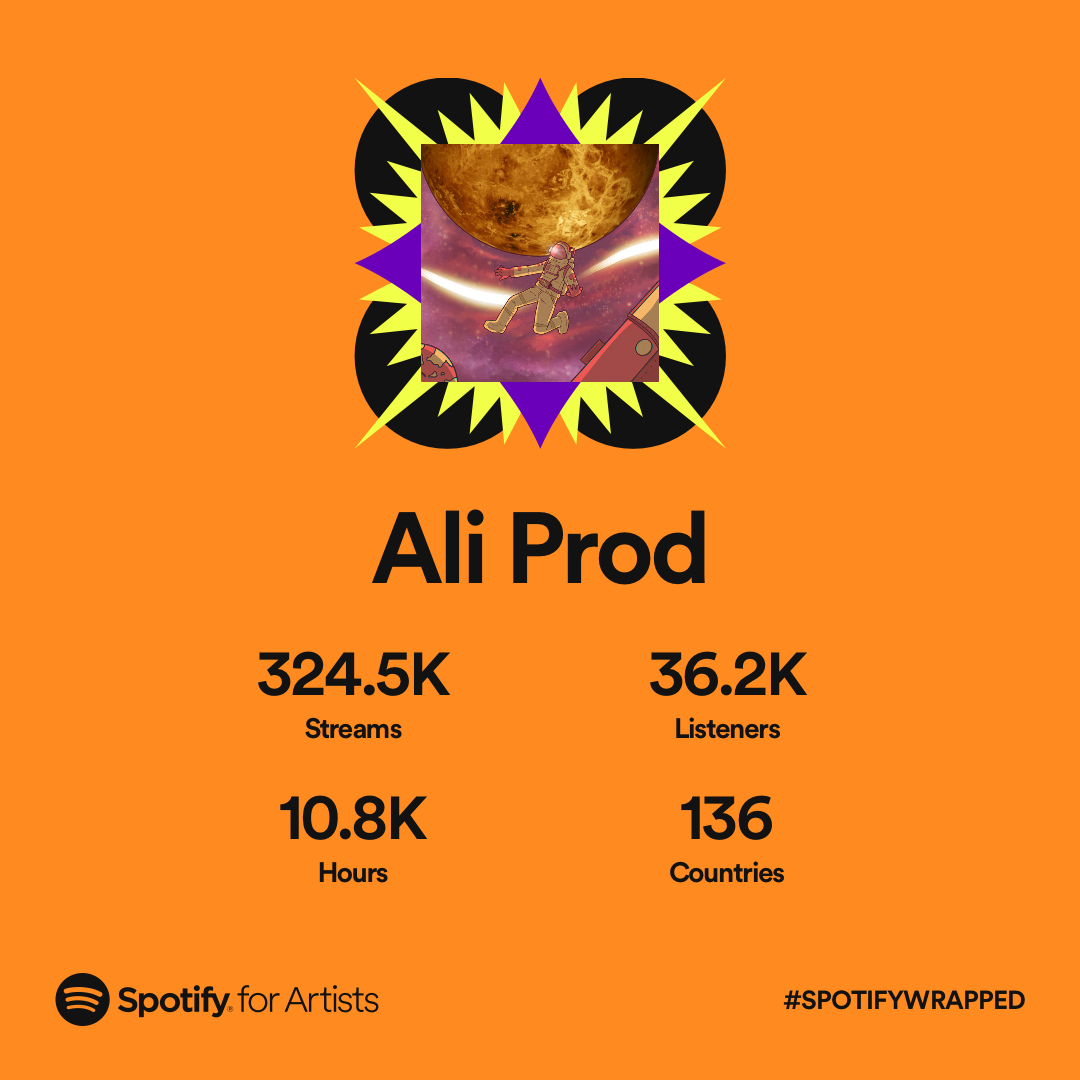

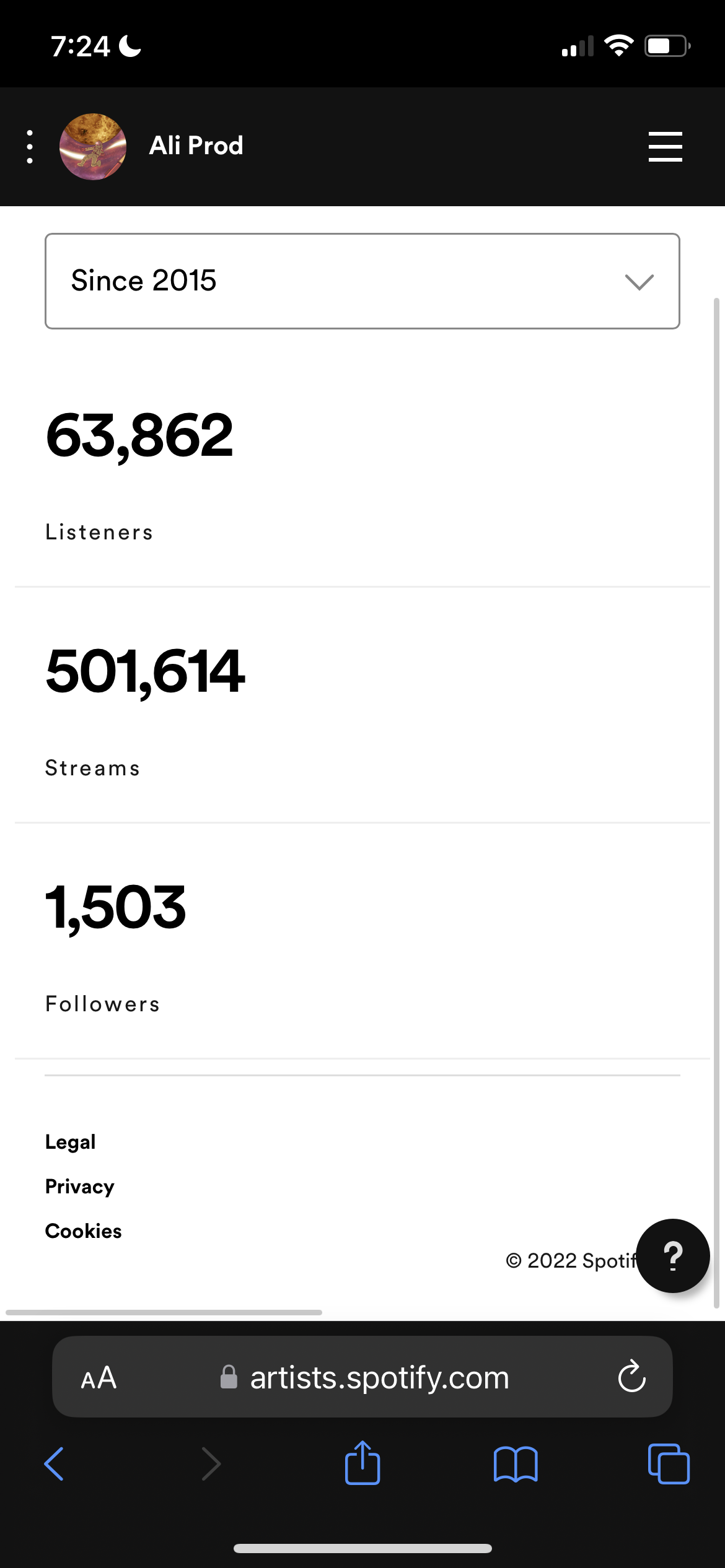

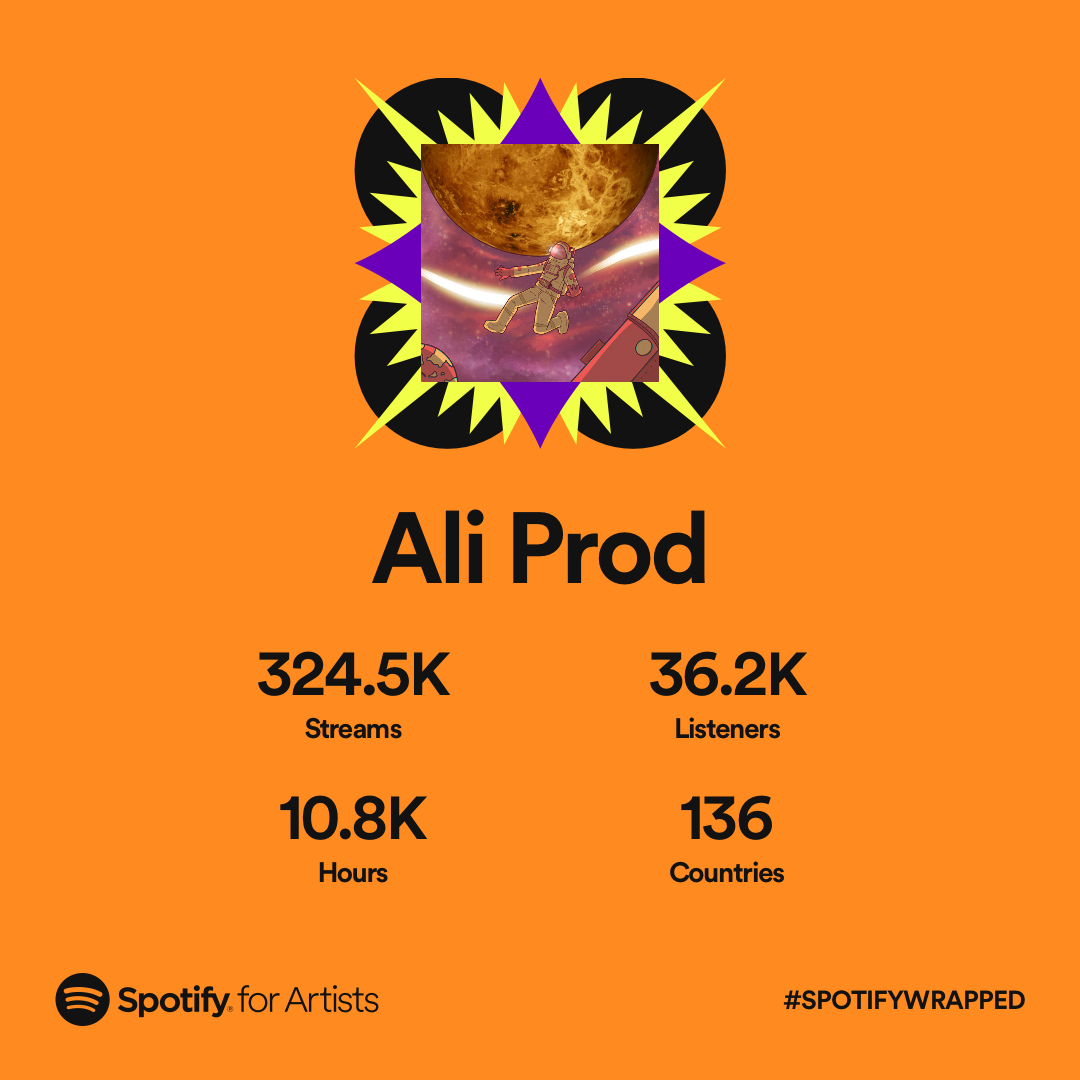

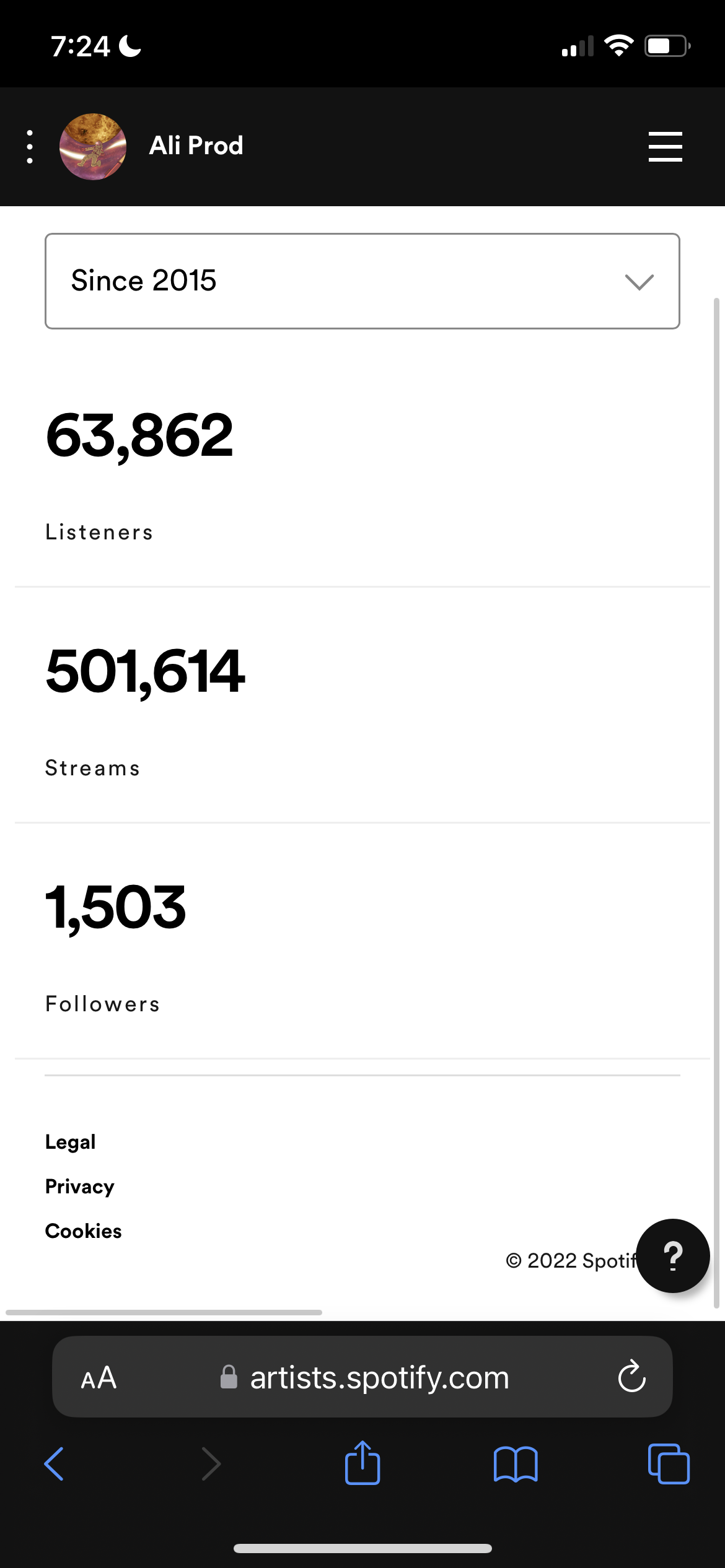

Before being removed without warning or appeal, my work had already reached a global audience — generating hundreds of thousands of streams, tens of thousands of listeners, and engagement across 100+ countries. This level of engagement was built over time through consistent listener activity, independent social media networking, word-of-mouth growth, and my involvement in online communities — including the lo-fi, vaporwave, indie electronic, and beat-making scenes.

The Artist Rights & Platform Accountability Act is a proposed federal legislative framework designed to address widespread abuses affecting independent musicians on digital streaming platforms (DSPs) and distribution services.

Drafted by independent artist Kenan Ali Erkan (Ali Prod®) in response to wrongful catalog removals and unverified fraud accusations, the act seeks to establish enforceable standards of due process, transparency, and accountability across the digital music ecosystem. Its goal is to create a more equitable system that treats music as labor and protects the creators who power the industry.

The act focuses on four primary pillars: Accountability, Royalty Oversight, Economic Protection, and Artist Empowerment.

Core Provisions:

• Clean Streaming Ecosystem Requirement:

Platforms and distributors must maintain a verifiably clean digital streaming environment, including the active detection and removal of fraudulent playlists, bot networks, and artificial traffic sources.

Artists cannot be penalized for automated or artificial streams outside of their control, and platforms are prohibited from shifting responsibility for systemic failures onto creators without clear, verifiable evidence of direct involvement.

[Section 3.3, Section 4.1]

• Due Process Before Removal:

Platforms (DSPs) and distributors are prohibited from removing music or terminating accounts without prior written notice, specific documented evidence of alleged fraud, and a meaningful opportunity for the artist to respond and appeal.

[Section 2.1]

• Establishment of NOMES (National Organization for Music Economic Safety):

The act proposes the creation of an independent oversight body responsible for monitoring fraud investigations, auditing platforms and distributors, and administering a formal appeals process for artists. NOMES would operate in coordination with existing regulatory frameworks while maintaining independent authority.

[Section 5.1]

• Innocent Until Proven Fraudulent:

The burden of proof lies with platforms and distributors—not the artist. Any enforcement action must be supported by independently verifiable evidence of intentional manipulation or fraud. Automated suspicion alone is not sufficient grounds for removal or penalty.

[Section 2.5]

• Royalty Protection & Transparency:

Platforms must provide clear, itemized royalty reports and are prohibited from withholding or seizing royalties without documented cause. In cases of wrongful enforcement, artists are entitled to full recovery of withheld earnings and associated damages.

[Section 3.1]

• Metadata Protection:

The act safeguards against unauthorized metadata tampering, ensuring that artist credits, ownership, and attribution cannot be altered without consent. Platforms must provide mechanisms to restore and verify accurate records.

[Section 9.1]

• Cultural Context Clause:

The act formally recognizes that organic listener behavior—such as repeated listening or “looping”—is a legitimate form of engagement. High stream counts alone cannot be used as evidence of fraud unless automated manipulation is clearly proven.

[Section 1.3]

Key Definitions:

• Locked-Out Artist:

An artist denied access to their DSP or distributor accounts without a proper appeals process or documented justification.

[Section 1 — Definitions]

• Fraudulent Playlist:

A playlist operated by bad actors (including bot-driven or pay-for-play systems) and used to artificially inflate streams. Platforms are required to actively detect and remove such playlists.

[Section 8.2]

• Shadowbanning:

A form of hidden suppression where music remains technically available but is deliberately excluded from search results, recommendations, or algorithmic visibility. This practice is prohibited under the act.

[Section 8.4]

Read the full legislation:

AliProd.Net

https://aliprod.net/artist-rights-act

Contact:

AliProd.Net@gmail.com

Documented Evidence:

Evidence Summary

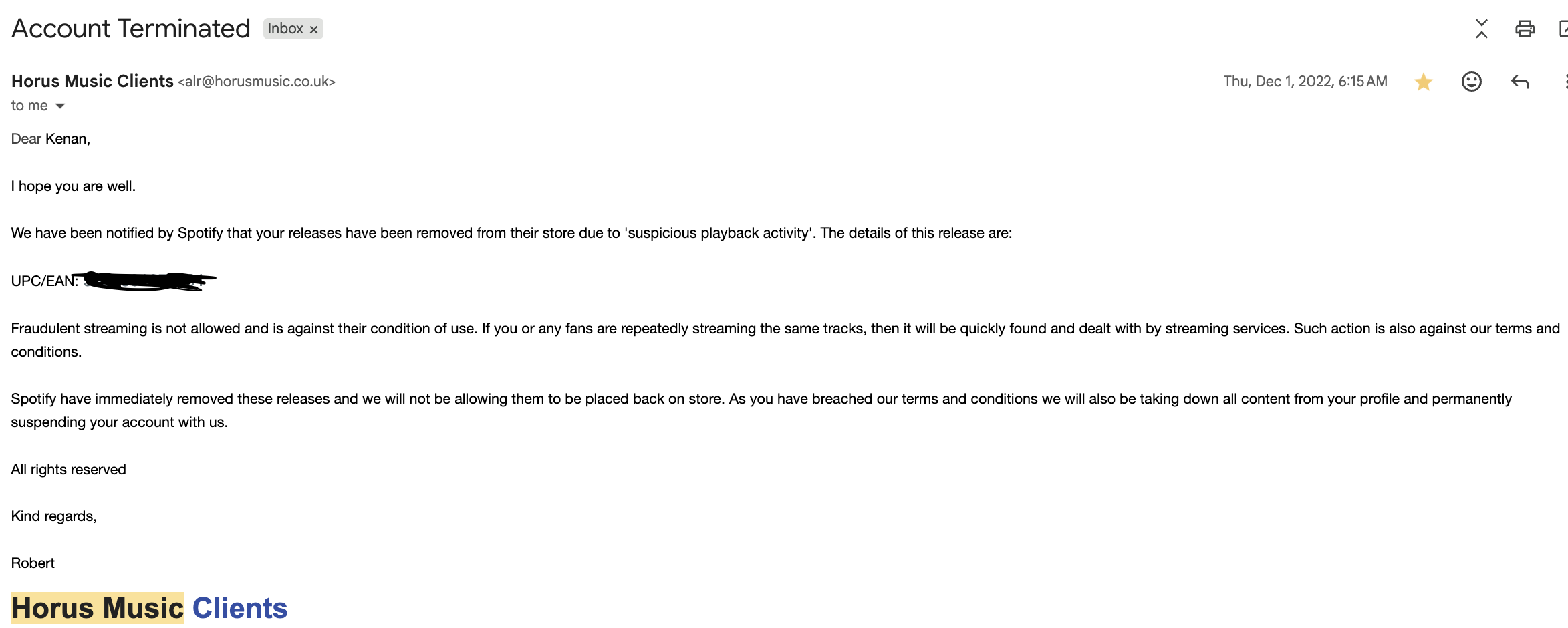

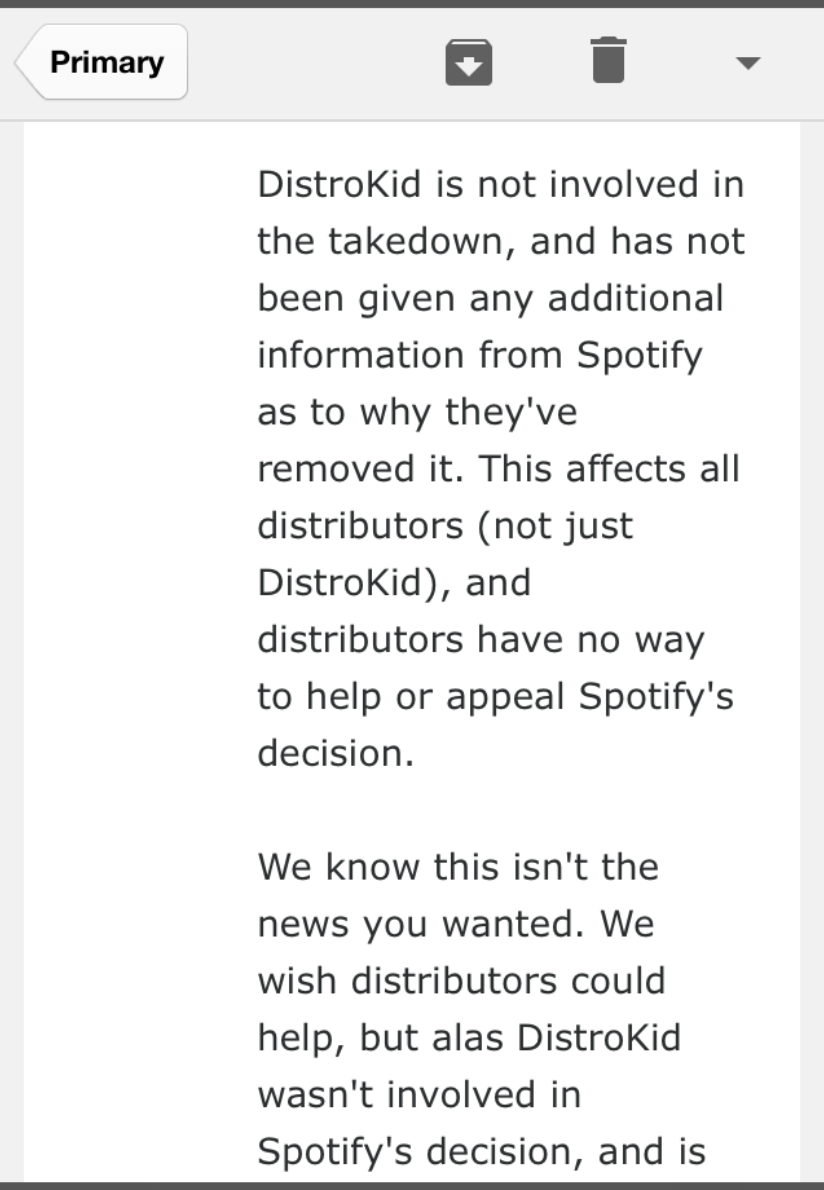

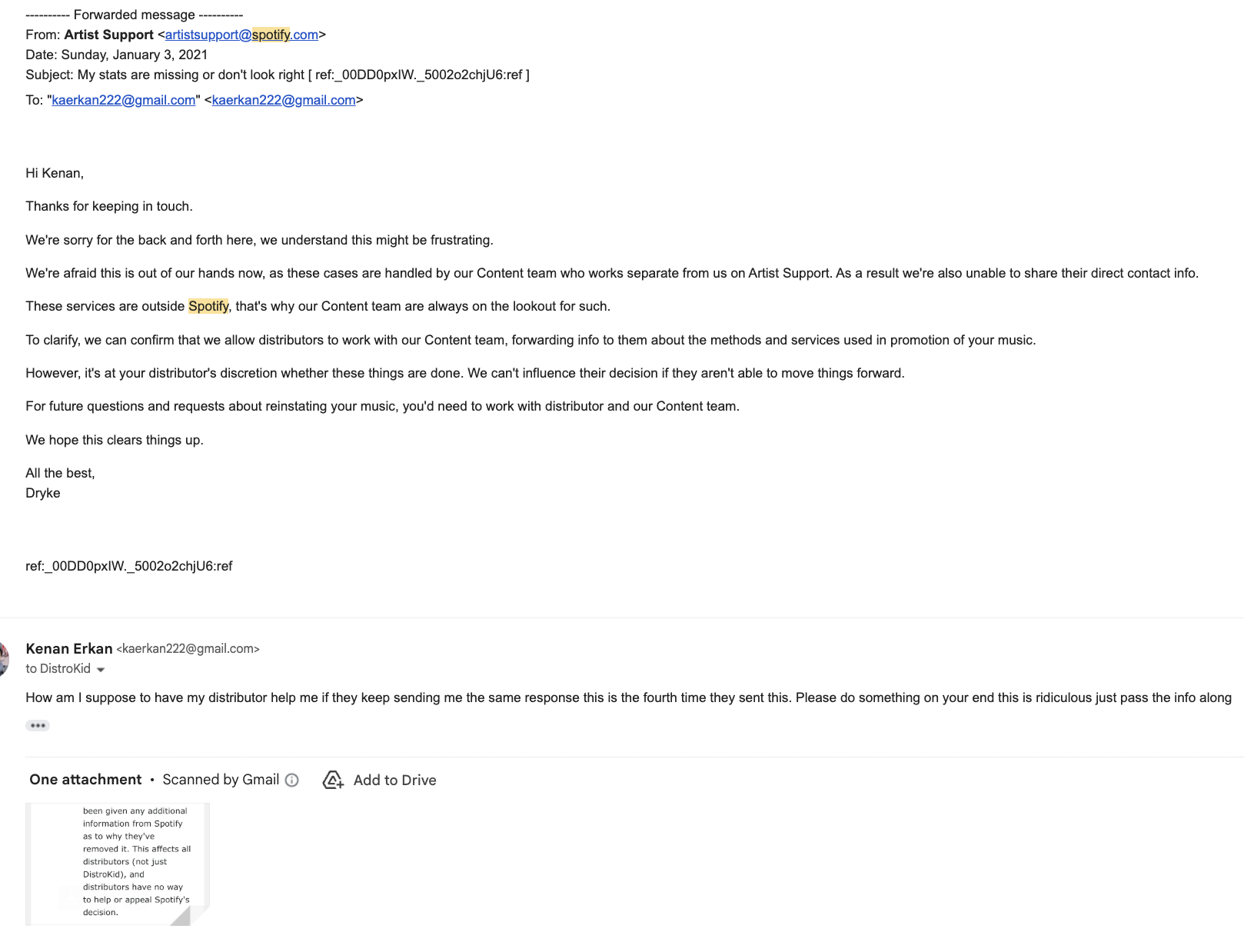

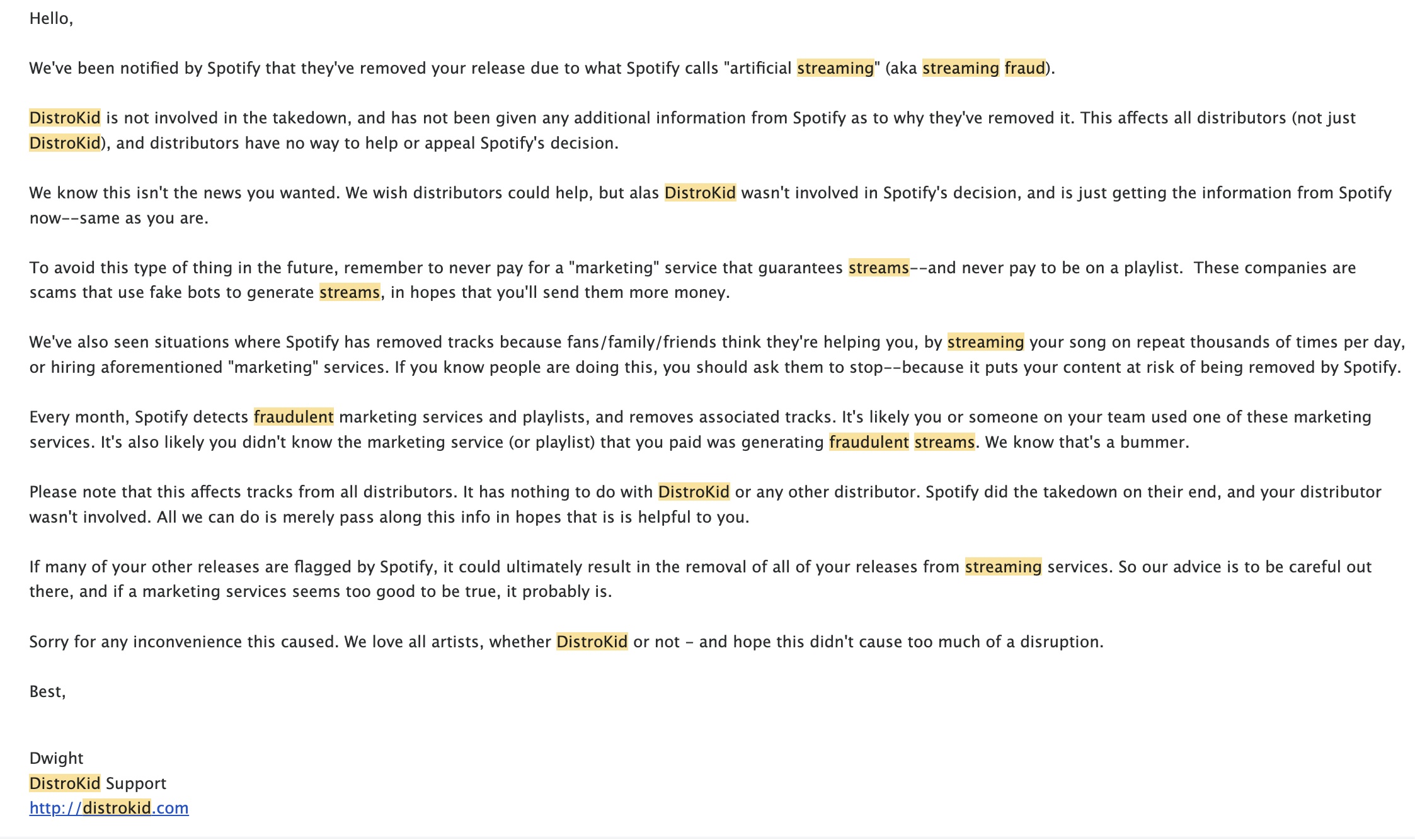

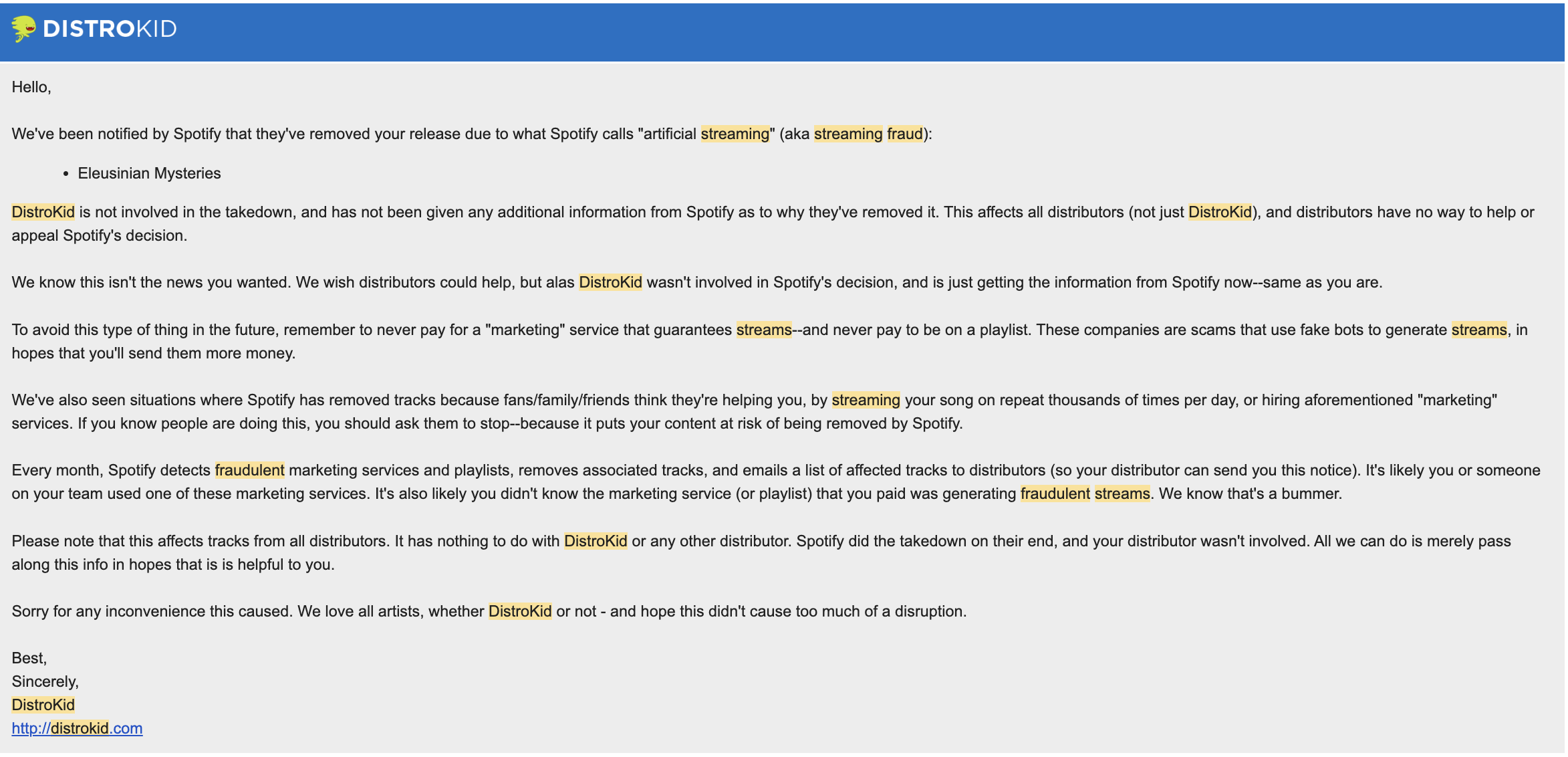

The following materials demonstrate that streaming platforms operate a centralized enforcement system in which they define what constitutes “artificial streaming,” detect it using internal and undisclosed methods, and apply penalties—including royalty removal, reduced visibility, and financial charges—without standardized requirements for evidence disclosure or a formal appeals process. These determinations are then passed through distributors such as DistroKid, TuneCore, and CD Baby, which often have no control over or visibility into the enforcement decisions. As a result, artists may face financial and professional consequences within a closed system that lacks independent oversight and consistent due process protections.

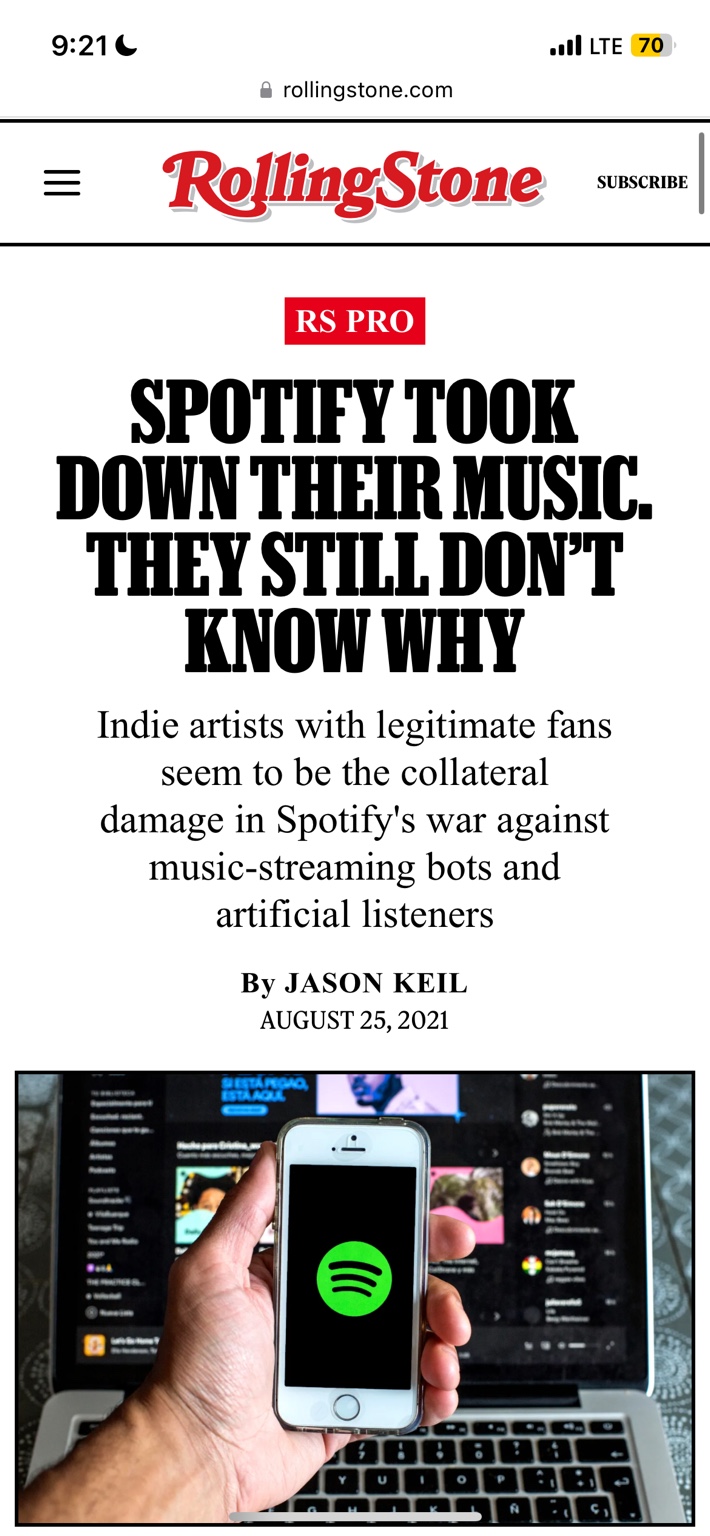

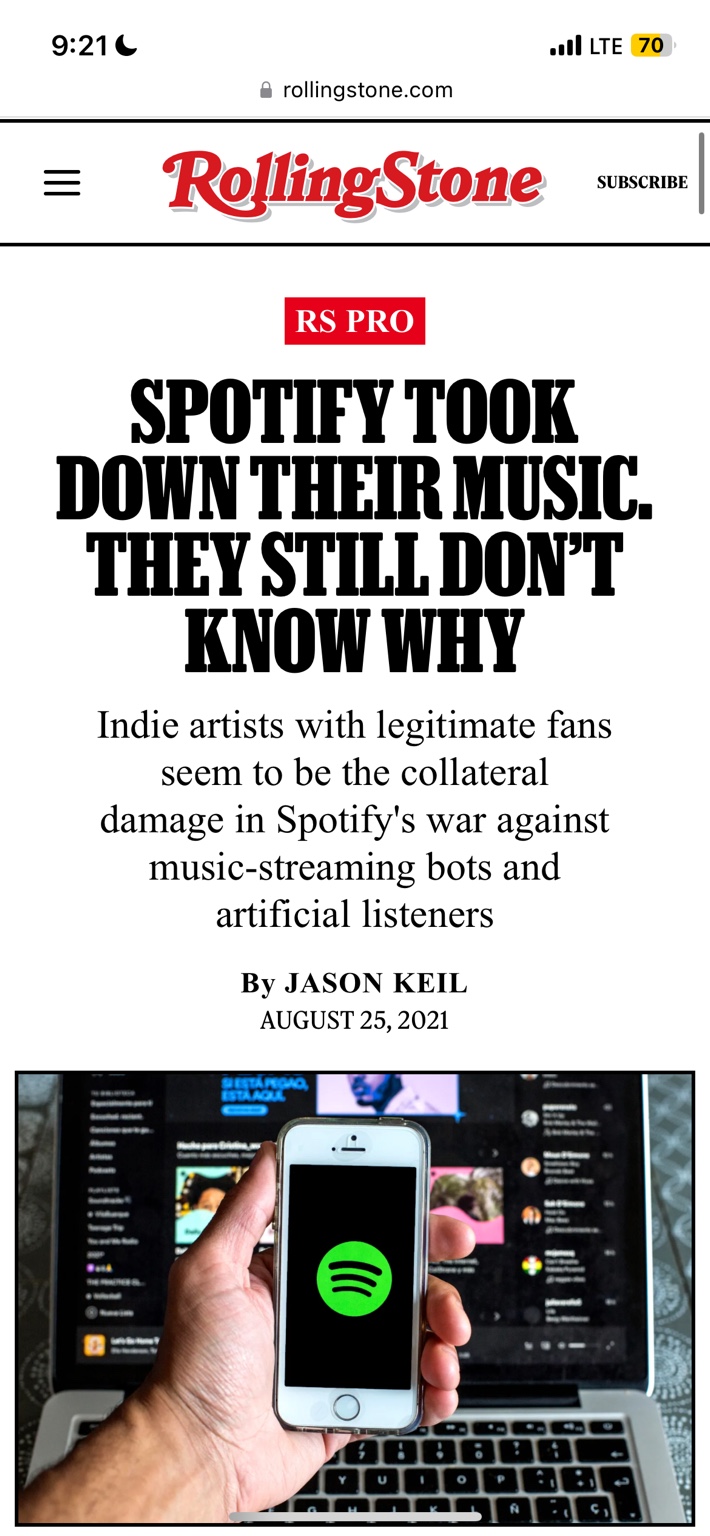

Platform enforcement policies primarily operate at the artist and distributor level, with penalties including content removal, royalty withholding, and financial charges applied directly to creators.

However, the broader ecosystem that contributes to artificial streaming—including playlist networks and third-party promotional channels—remains less transparently addressed within publicly documented enforcement processes.

This creates an imbalance in how enforcement is applied, where downstream participants (artists) bear the consequences of activity that may originate from upstream sources outside of their direct control.

The following examples illustrate how these enforcement systems function in practice.

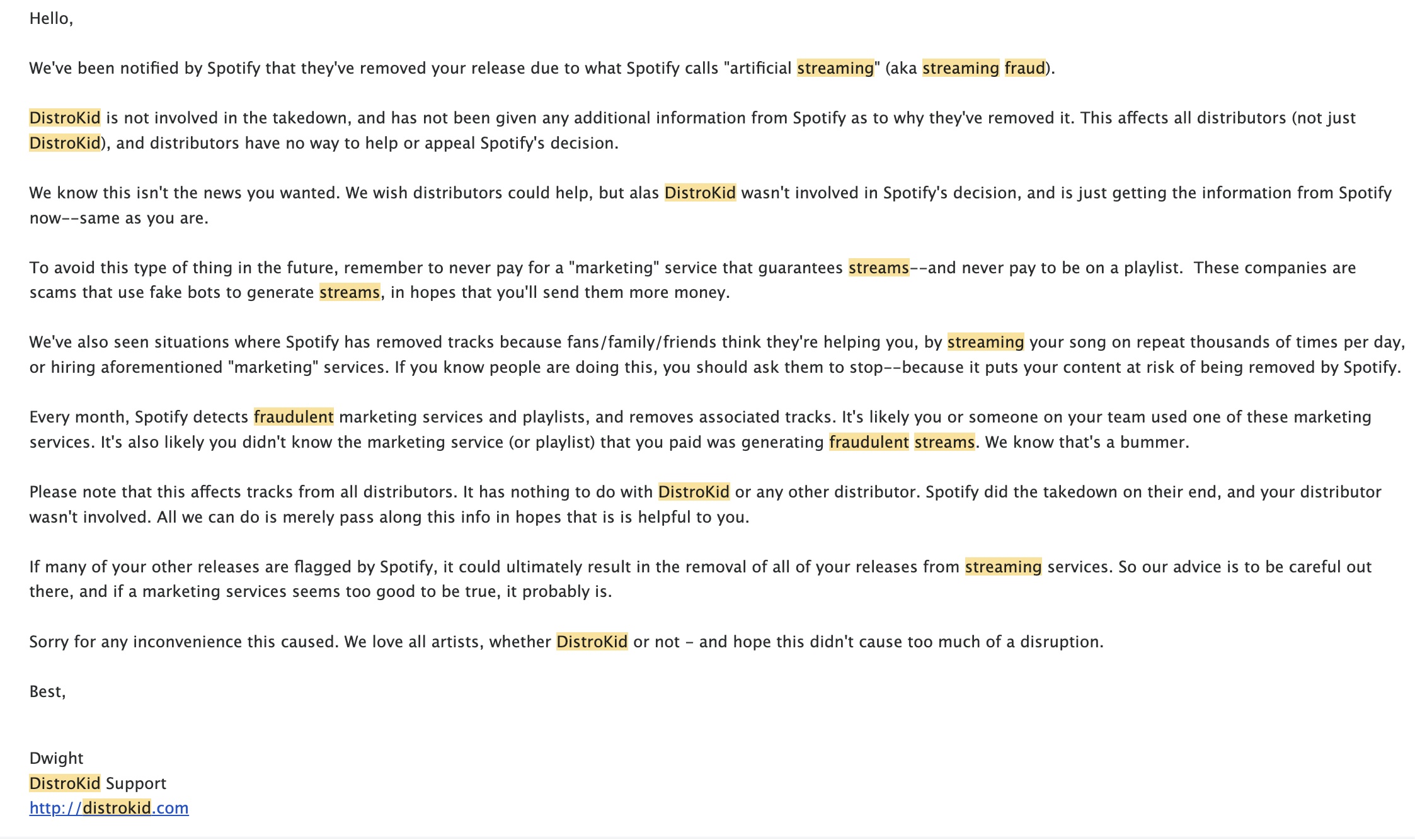

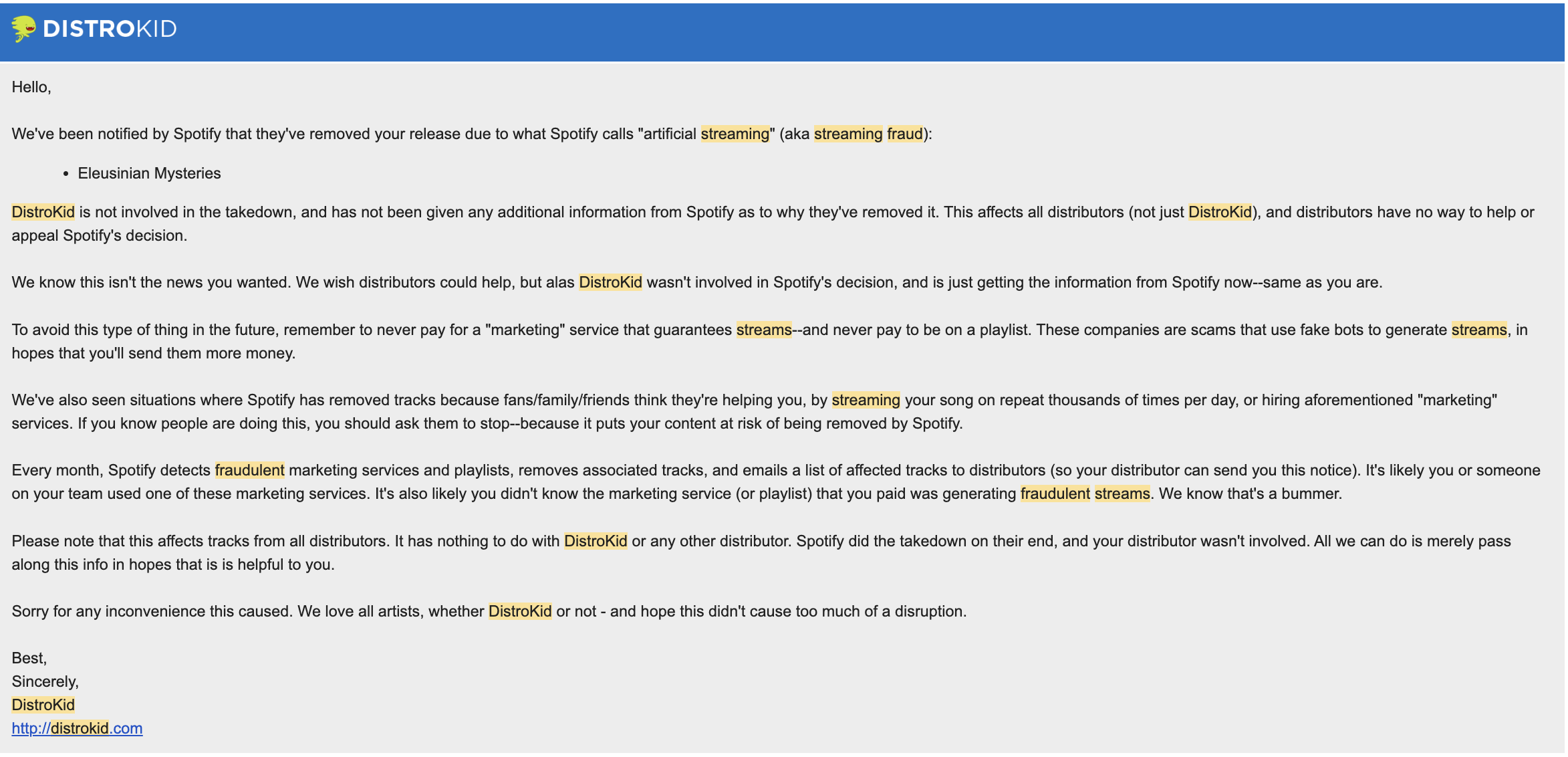

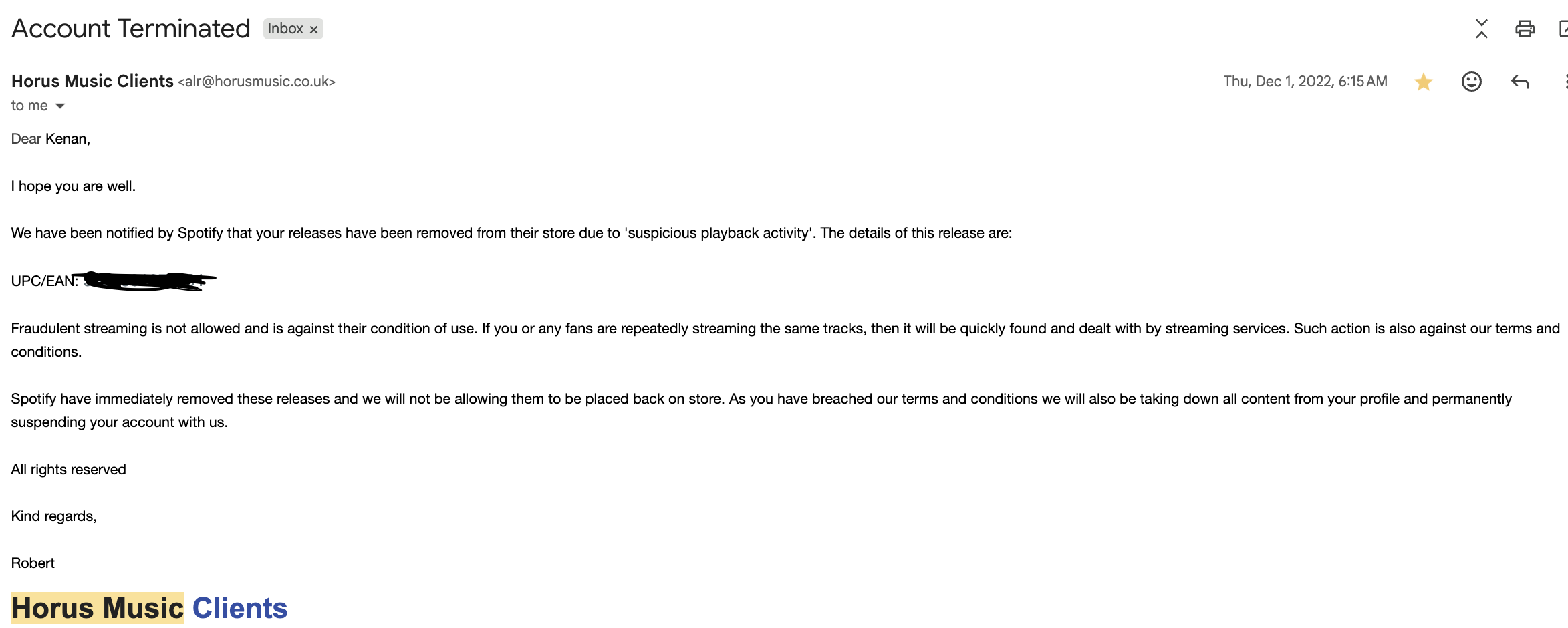

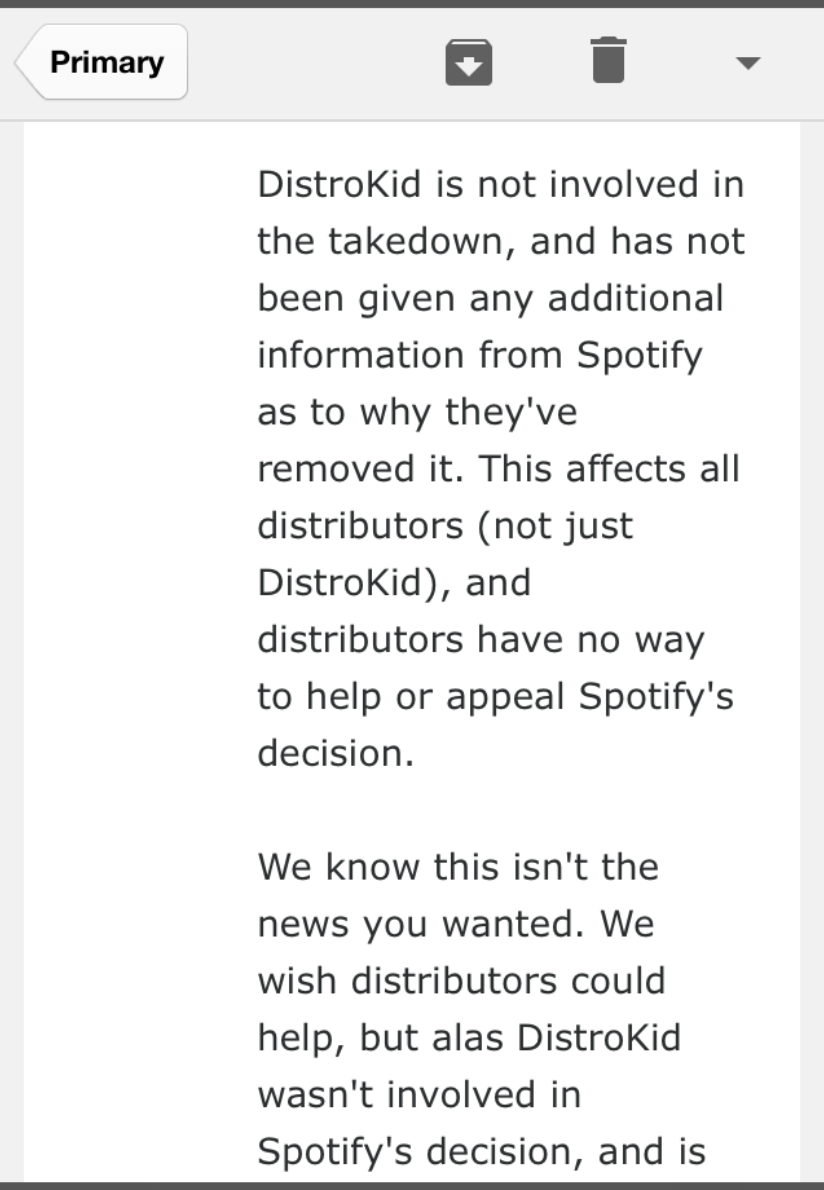

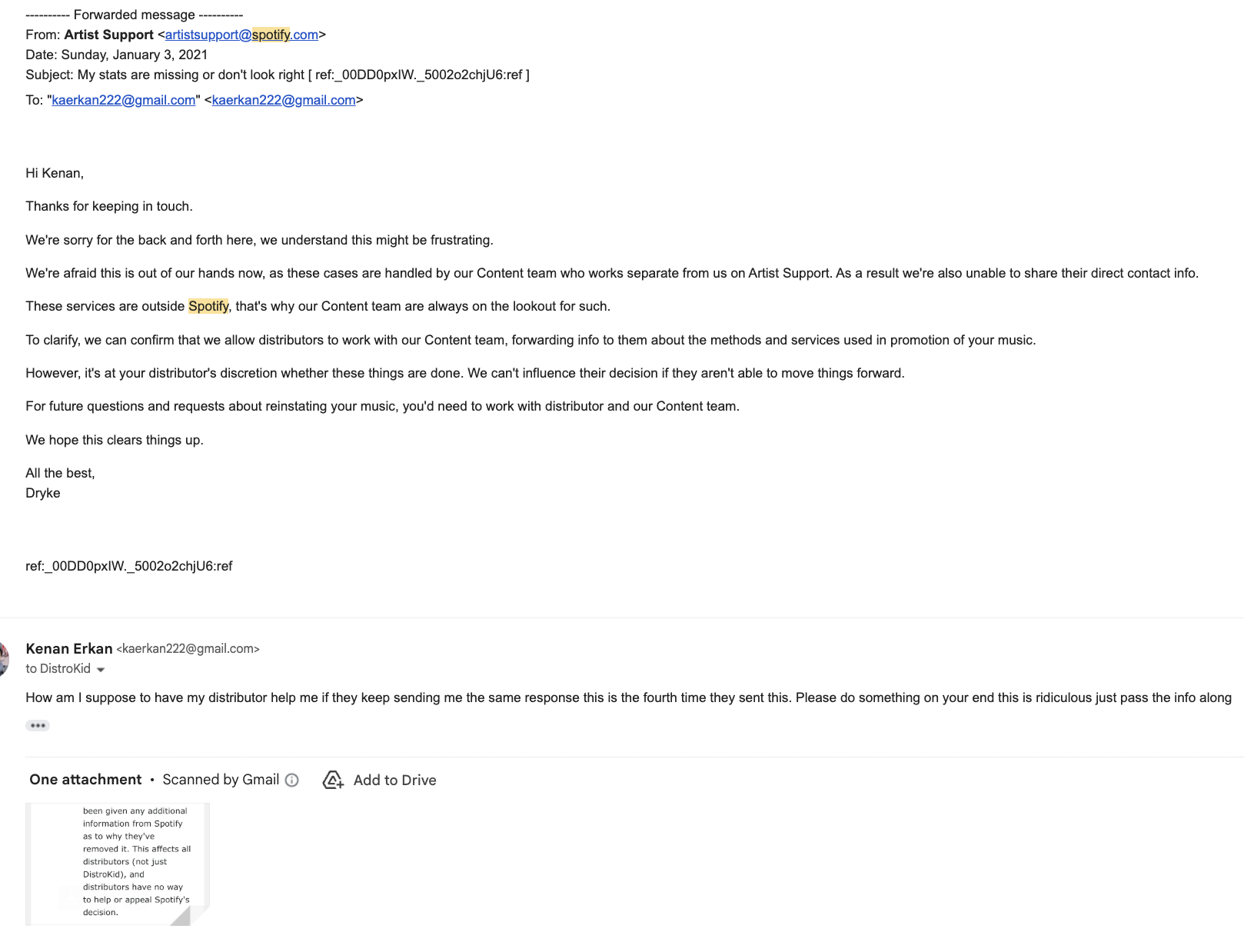

The following communications illustrate the lack of transparency, accountability, and due process between platforms and distributors.

Unregulated Enforcement Frameworks in Practice

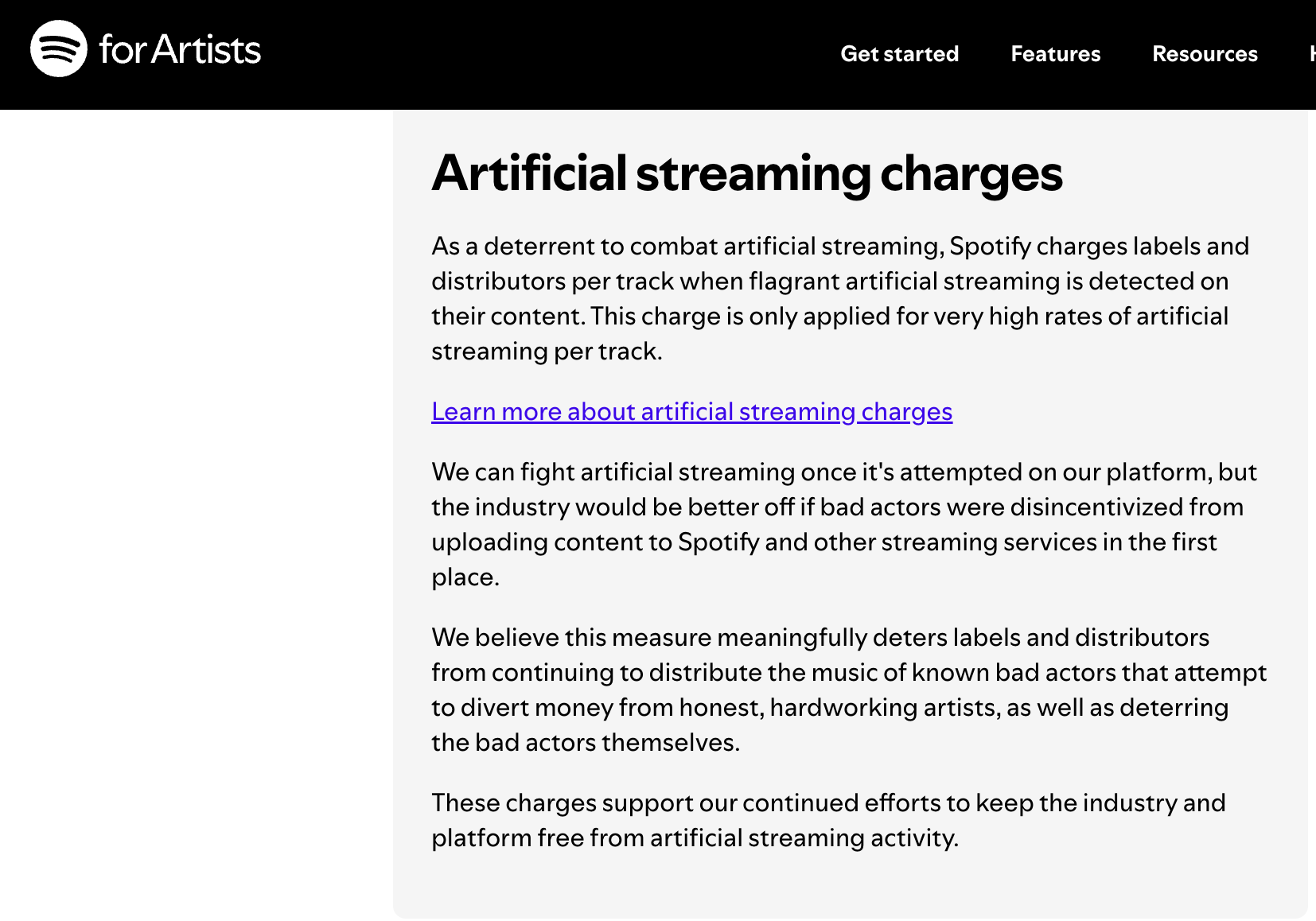

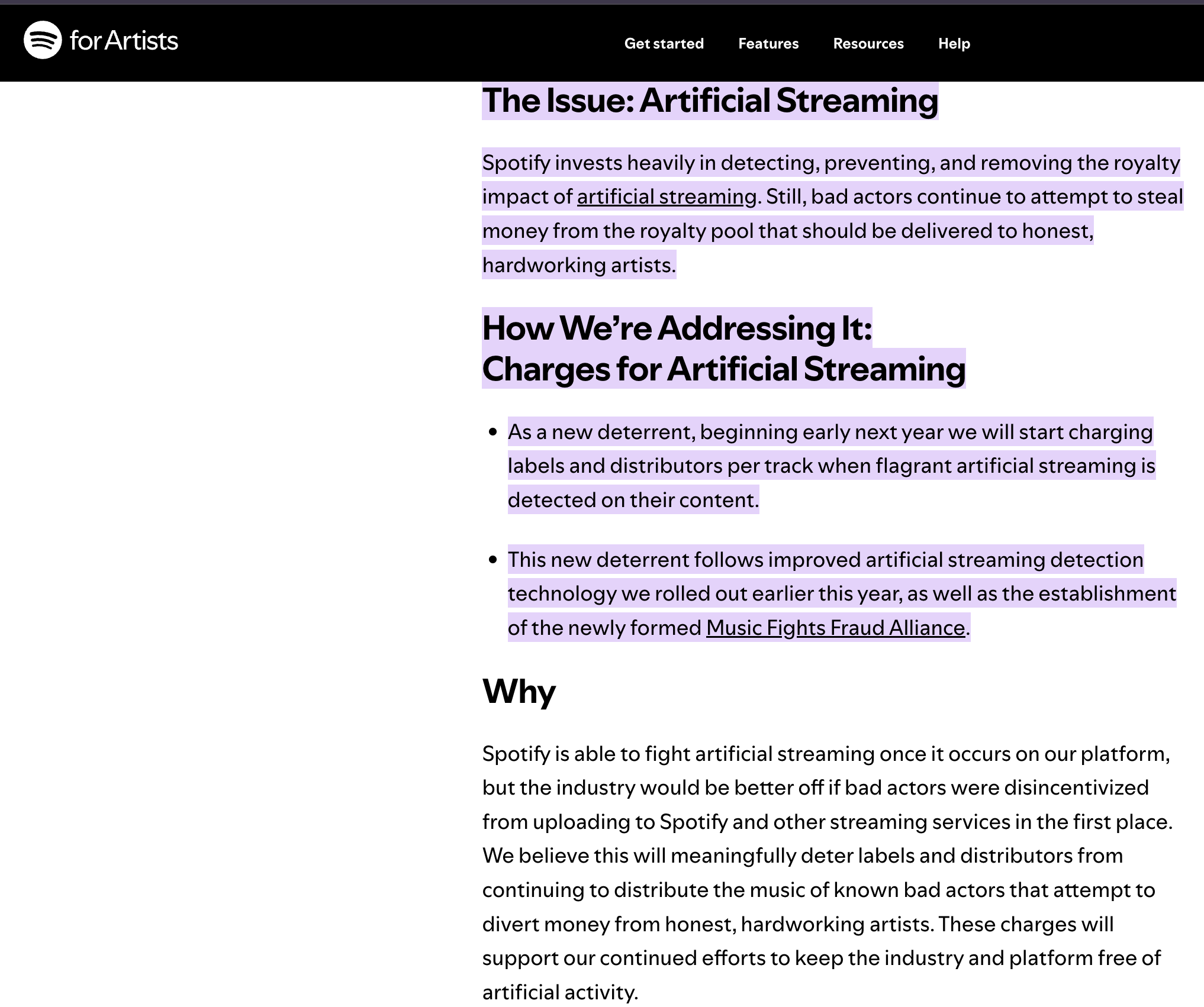

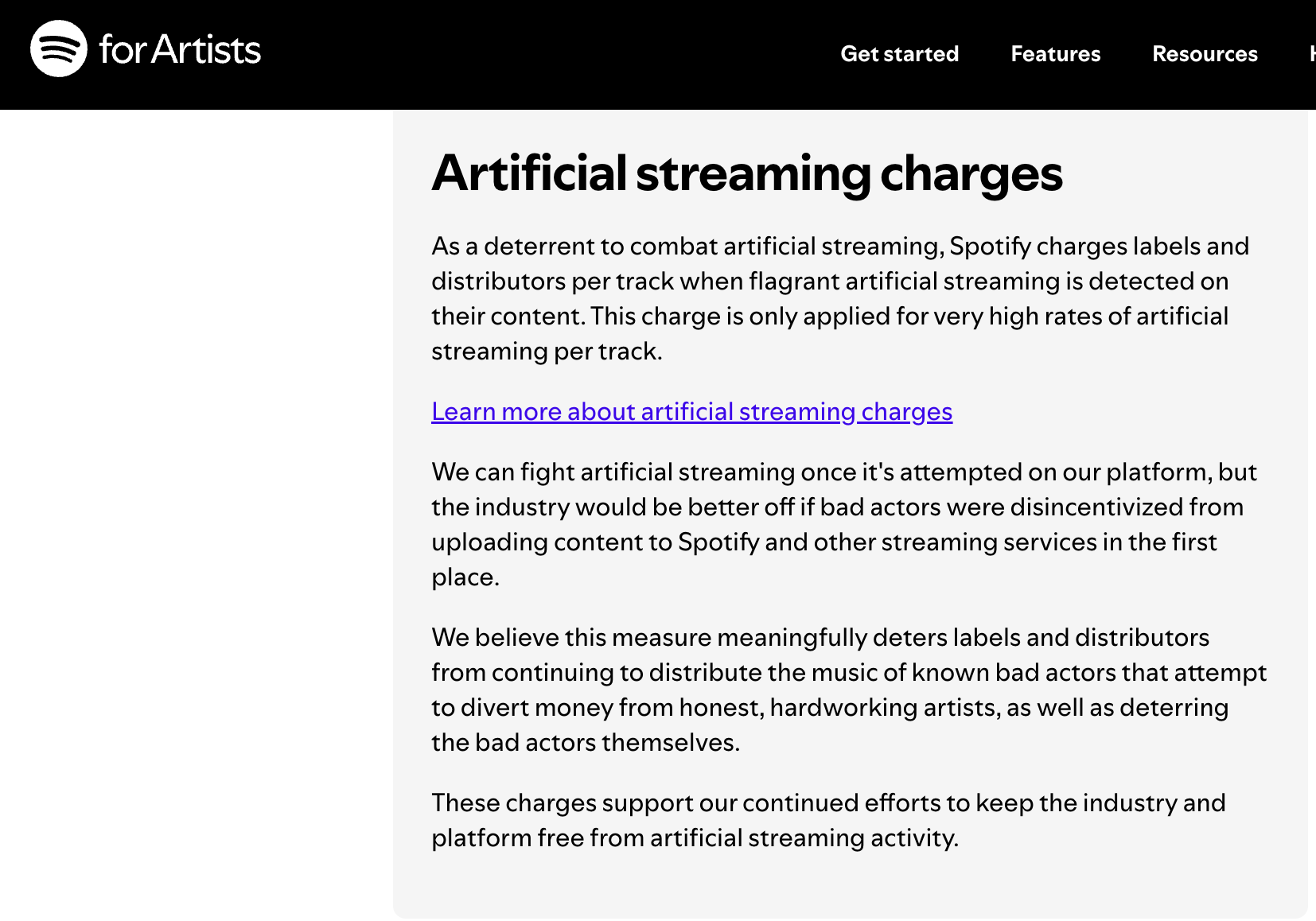

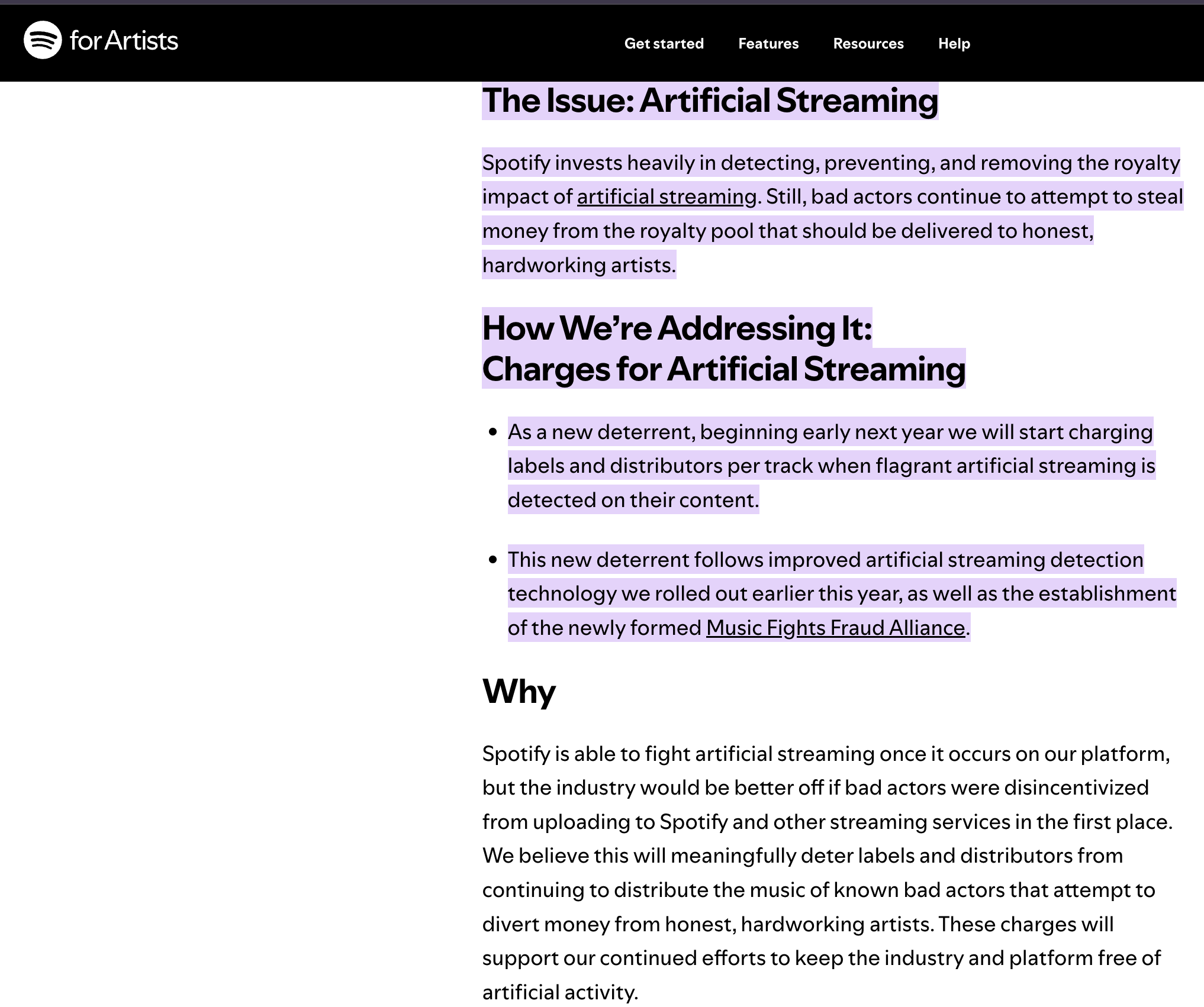

Spotify’s published policy confirms that financial penalties are applied to distributors when artificial streaming is detected. However, no public standard exists requiring evidence transparency, artist notification, or independent review prior to enforcement—demonstrating the exact regulatory gap this Act seeks to address.

Distributor-Level Financial Penalties Passed to Artists

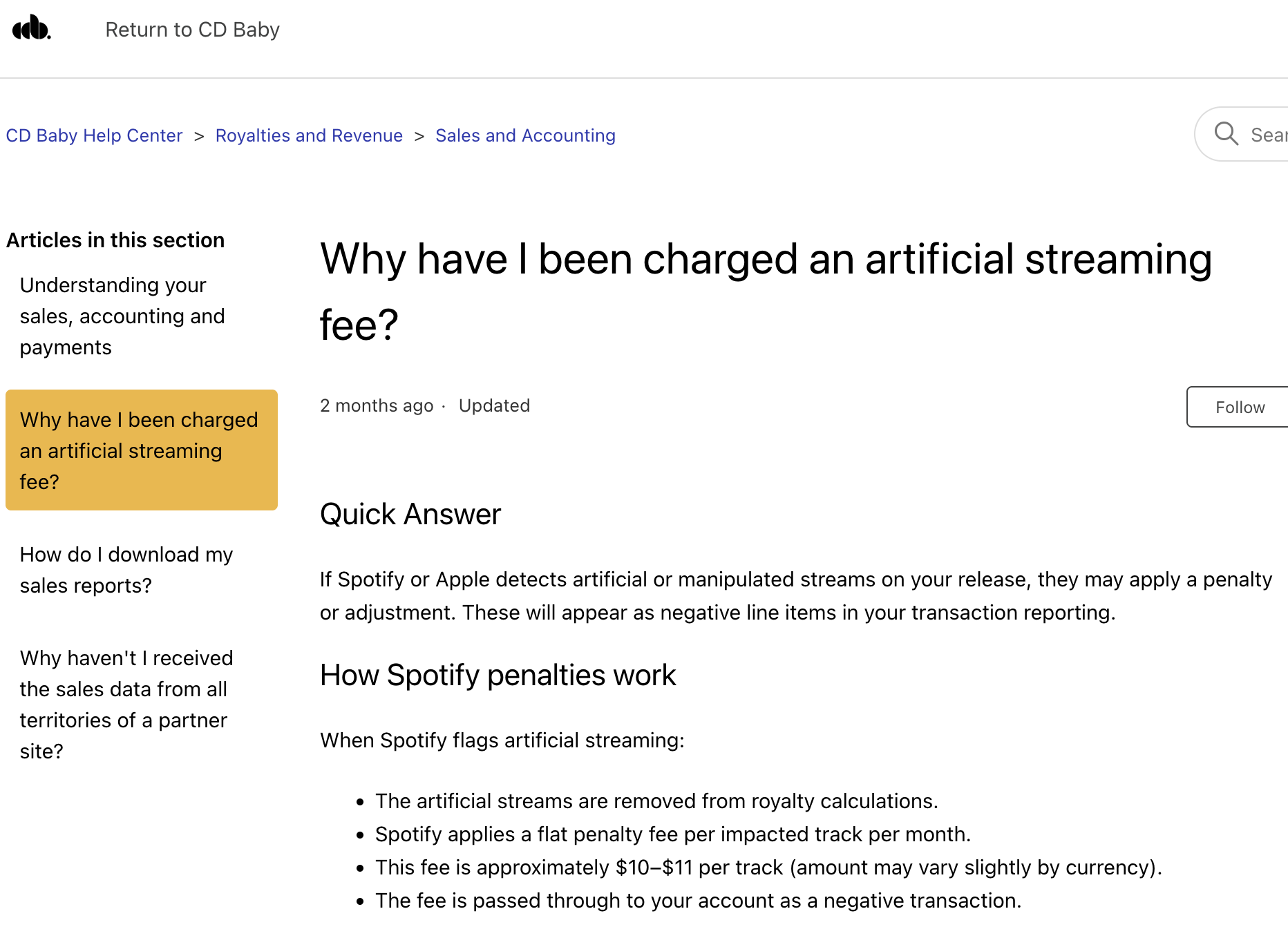

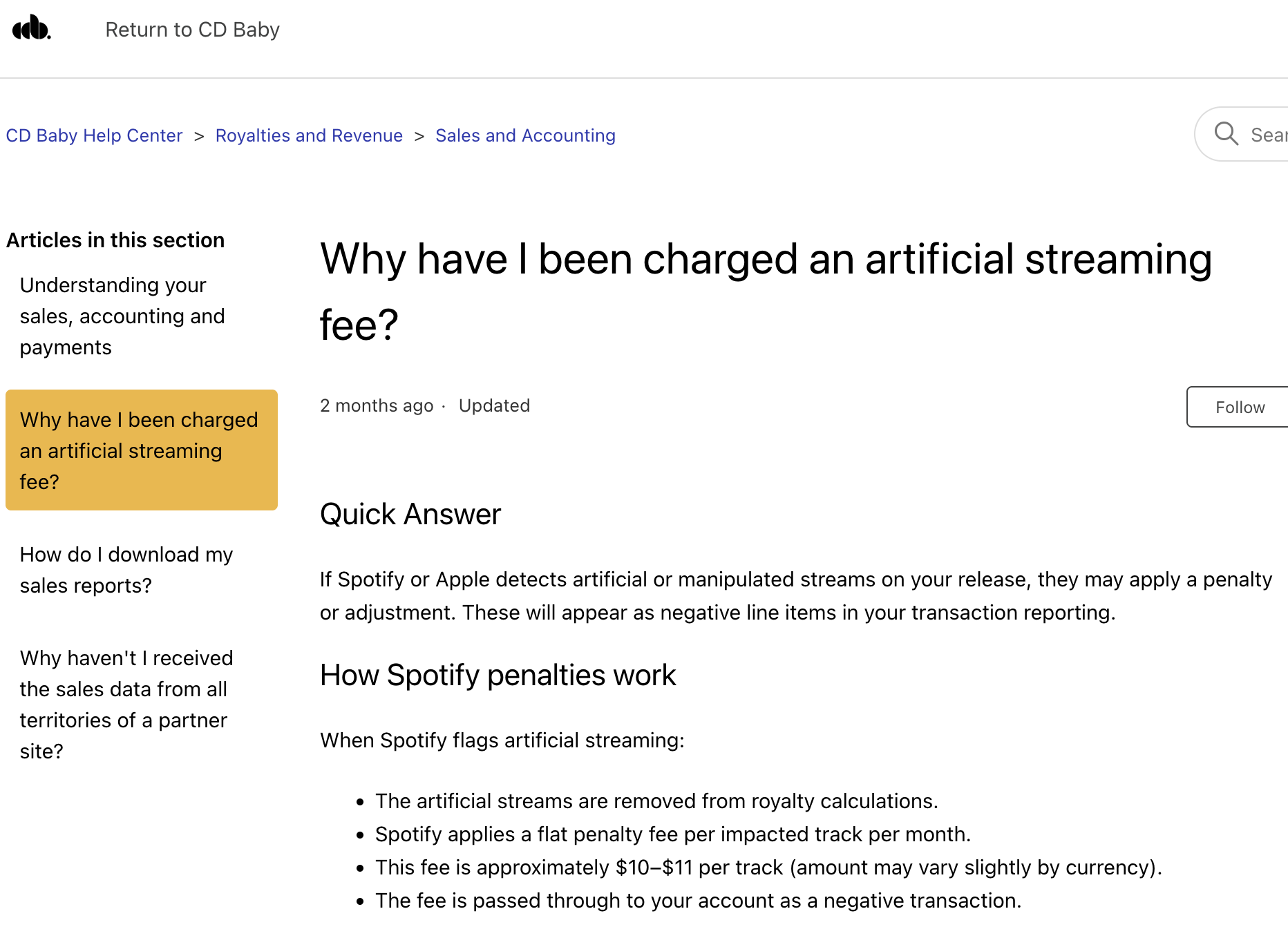

Distributor documentation confirms that when Spotify flags artificial streaming, financial penalties are applied at the track level and passed through as negative transactions in artist accounts. These penalties are triggered by platform detection systems, without standardized requirements for independent verification or artist-side dispute mechanisms.

Cross-Platform Enforcement Standardization Across Distributors

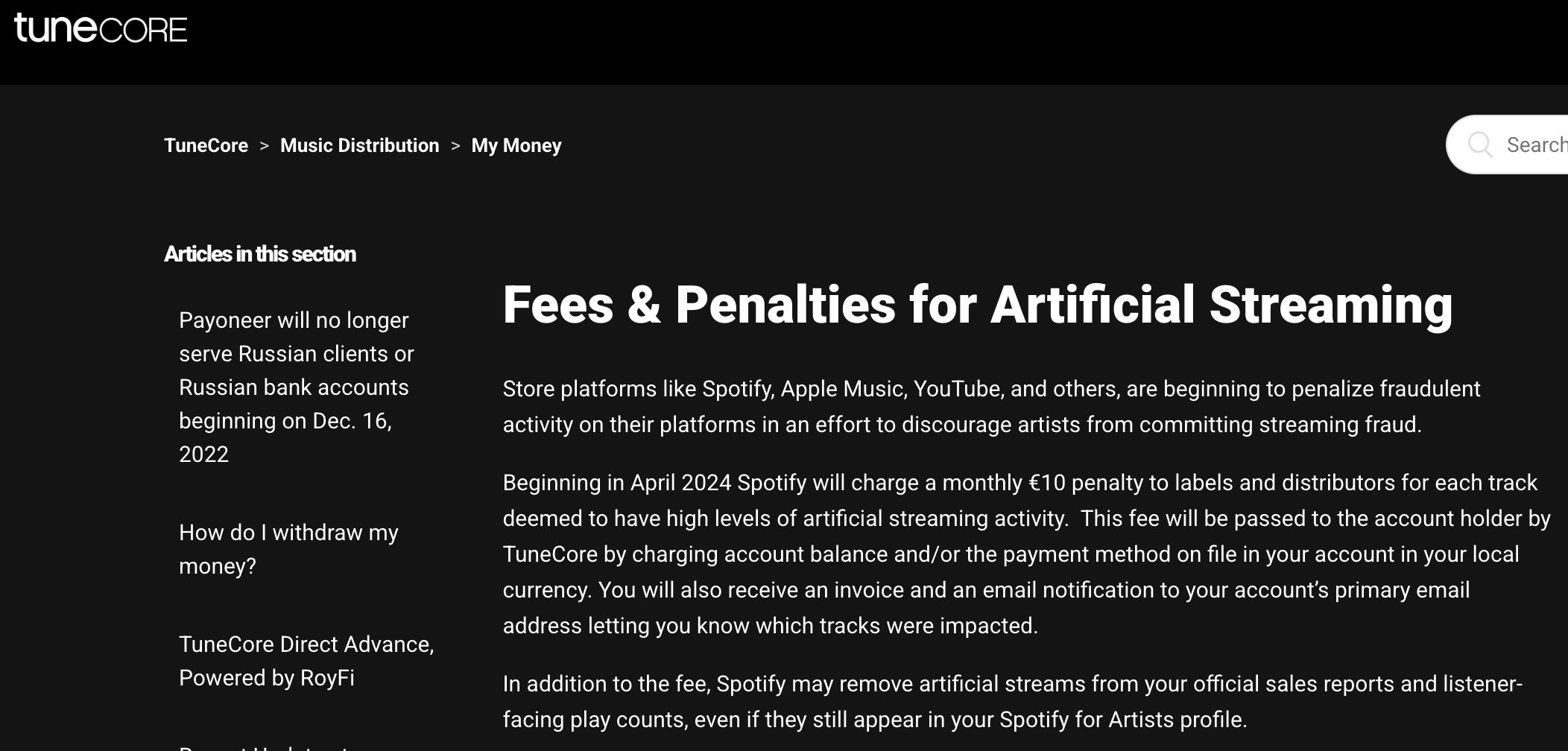

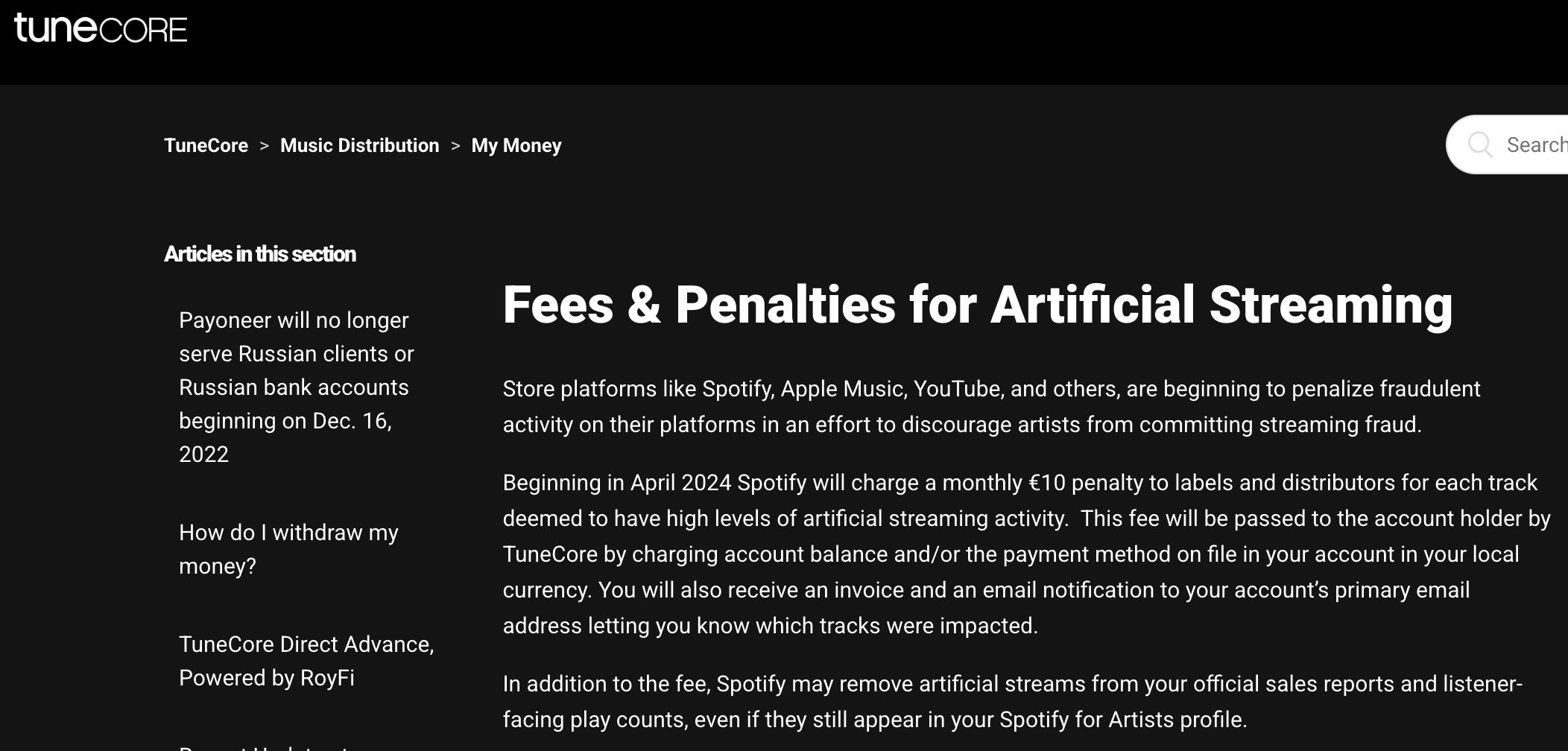

Multiple distribution platforms confirm the implementation of recurring per-track penalties tied to Spotify’s artificial streaming determinations. This reflects a shared enforcement structure across the distribution ecosystem, where platform-level decisions directly translate into financial consequences for artists.

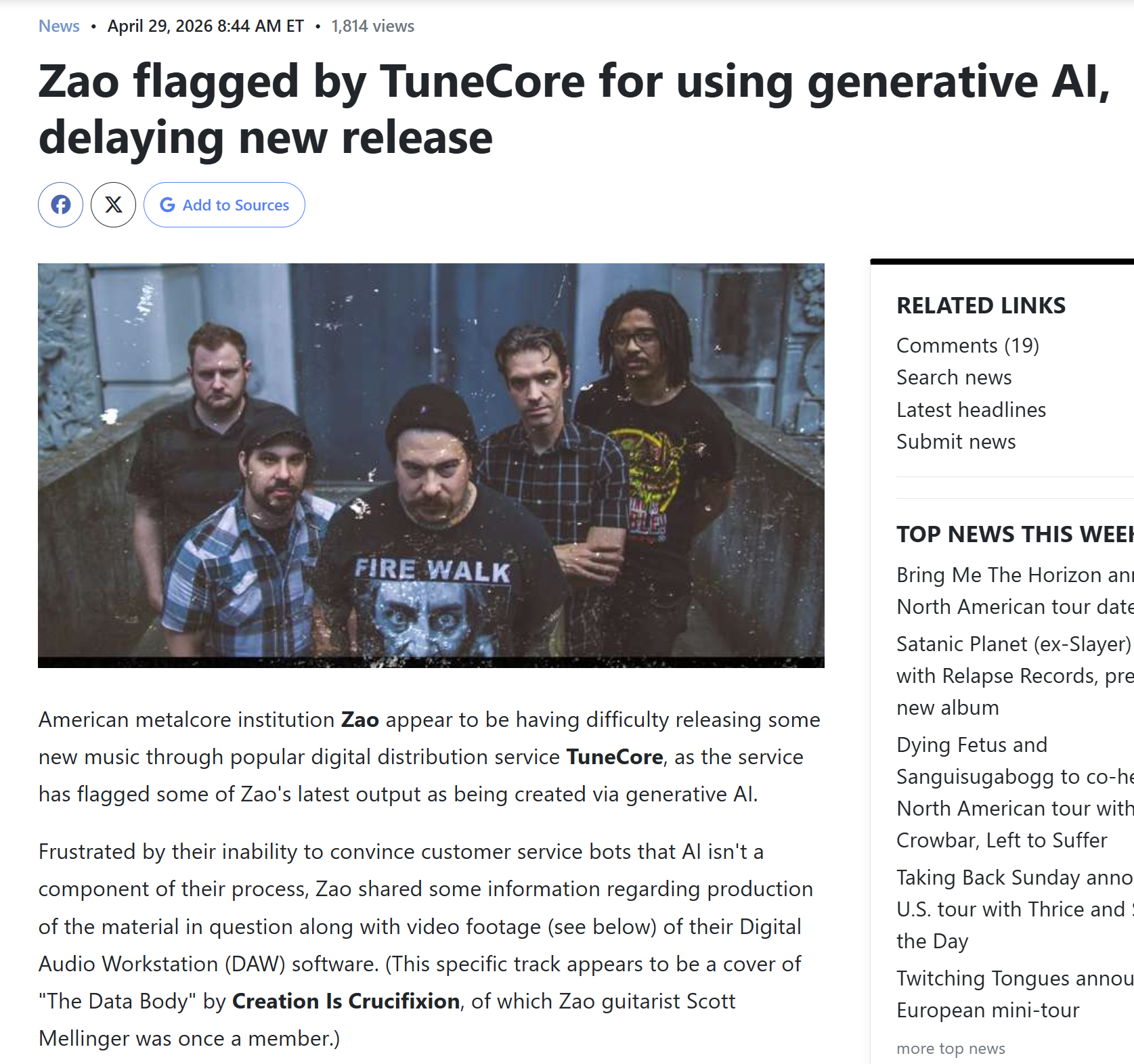

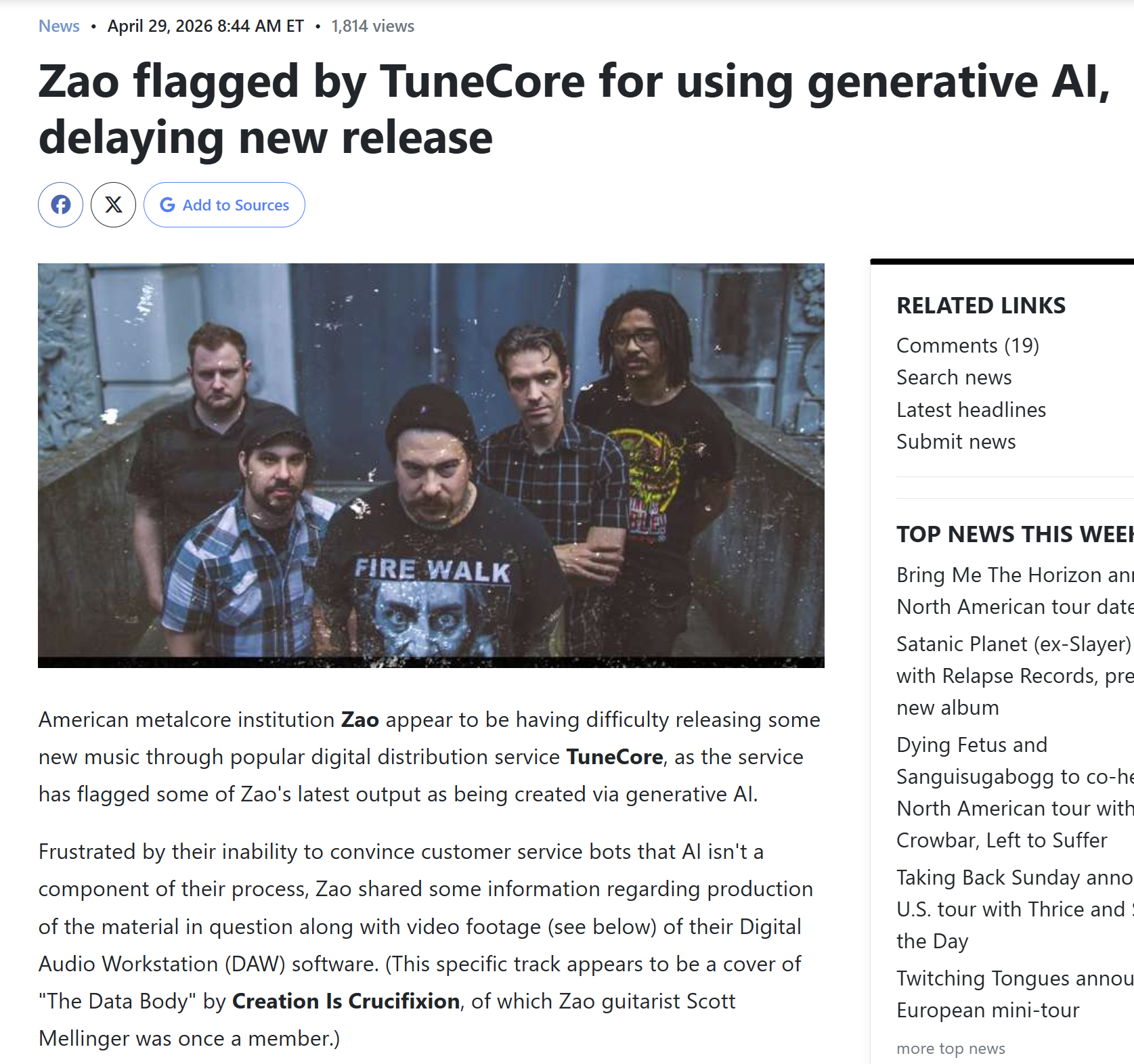

Recent cases further demonstrate the scope of this issue. In 2026, the band Zao was flagged by TuneCore for allegedly using generative AI, delaying the release of new music despite the group providing evidence of their real production process. This highlights how current enforcement systems can misidentify legitimate work, creating barriers for established artists as well as independent creators.

Automated Financial Deductions Based on Platform Flags

Artists may receive direct financial deductions labeled as “Artificial Streaming Penalty Fees” following platform-level fraud determinations. These charges are applied per track and processed automatically within artist accounts, reinforcing the lack of a standardized pre-enforcement review process.

Platform-Defined Fraud Standards and Internal Enforcement Systems

Spotify defines artificial streaming as activity that does not reflect “genuine user listening intent” and enforces this standard through internal detection, mitigation, and removal systems. However, the methodologies and thresholds used to determine violations remain undisclosed.

Total Economic and Visibility Suppression Following Enforcement

Spotify confirms that streams flagged as artificial are excluded from royalties, public metrics, and algorithmic recommendation systems. This results in both financial loss and reduced platform visibility for affected artists, compounding the impact of enforcement actions.

Royalty Thresholds and Withheld Micro-Payments

Spotify’s published policy confirms that millions of tracks generate fractional earnings that often never reach artists due to payout minimums and transaction fees. While these funds accumulate at scale, individual creators are effectively excluded from compensation—highlighting a lack of enforceable standards for minimum payout access and royalty distribution fairness.

Broad and Ambiguous Definitions of Artificial Streaming

Spotify’s guidance discourages certain forms of repeated or coordinated listening behavior, including looping or structured fan engagement strategies. However, these definitions remain broadly framed and lack clear thresholds or transparency—creating ambiguity around what constitutes legitimate listener behavior versus enforceable violations.

Unregulated Industry-Wide Enforcement Standards

Platform and distributor guidance demonstrates a consistent, shared approach to defining and penalizing artificial streaming across the digital music ecosystem. However, these aligned enforcement standards exist without independent oversight, transparent evidentiary requirements, or formalized due process—leaving artists subject to a coordinated but unregulated system of control.

Distributor Communications During the 2021 Enforcement Period:

During the 2021 wave of platform enforcement actions affecting independent artists, distributors publicly communicated in ways that did not directly address artist concerns regarding removals, appeals, or financial accountability.

The following examples reflect public-facing messaging from DistroKid during this period.

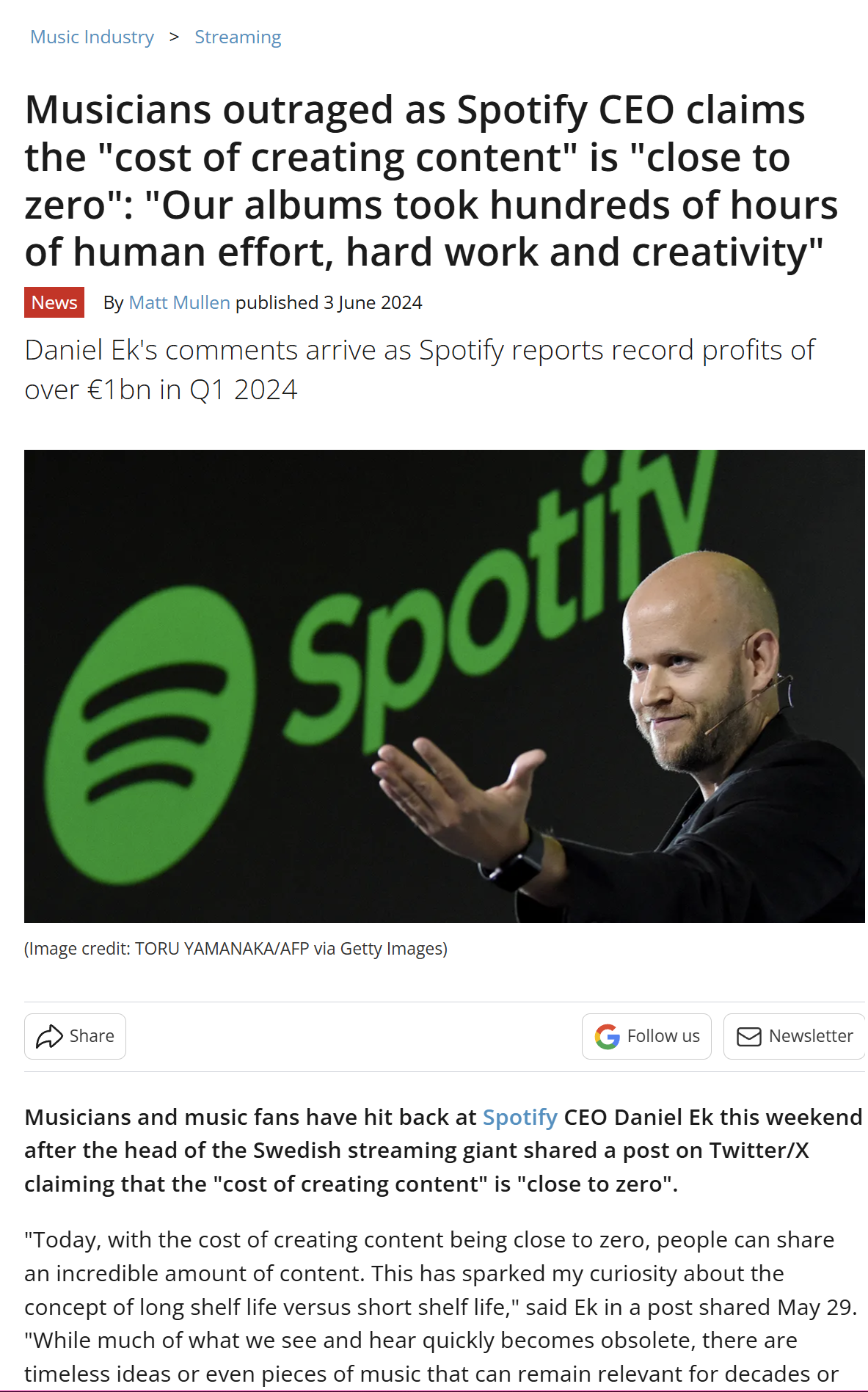

Executive Commentary on Creative Value:

Public statements from leadership at Spotify have contributed to broader discussion around how creative work is valued within streaming ecosystems.

In multiple instances, commentary framing music as “content” and minimizing the cost of creation has drawn criticism from artists and industry observers.

Notably, the enforcement actions described above were applied to a catalog of formally registered works with clear authorship and documentation, demonstrating that existing systems do not reliably distinguish between legitimate creators and suspected fraudulent activity.

This raises broader concerns about how enforcement is applied across the streaming ecosystem, particularly when actions are taken without transparent standards, consistent verification processes, or meaningful opportunities for review.

As it stands, these systems place the burden of risk on artists rather than ensuring accountability within the platforms themselves.

1,012

Petition created on May 31, 2025