Demand for Immediate Release of OpenAI's Deep Research System Card

The Issue

Why This Matters

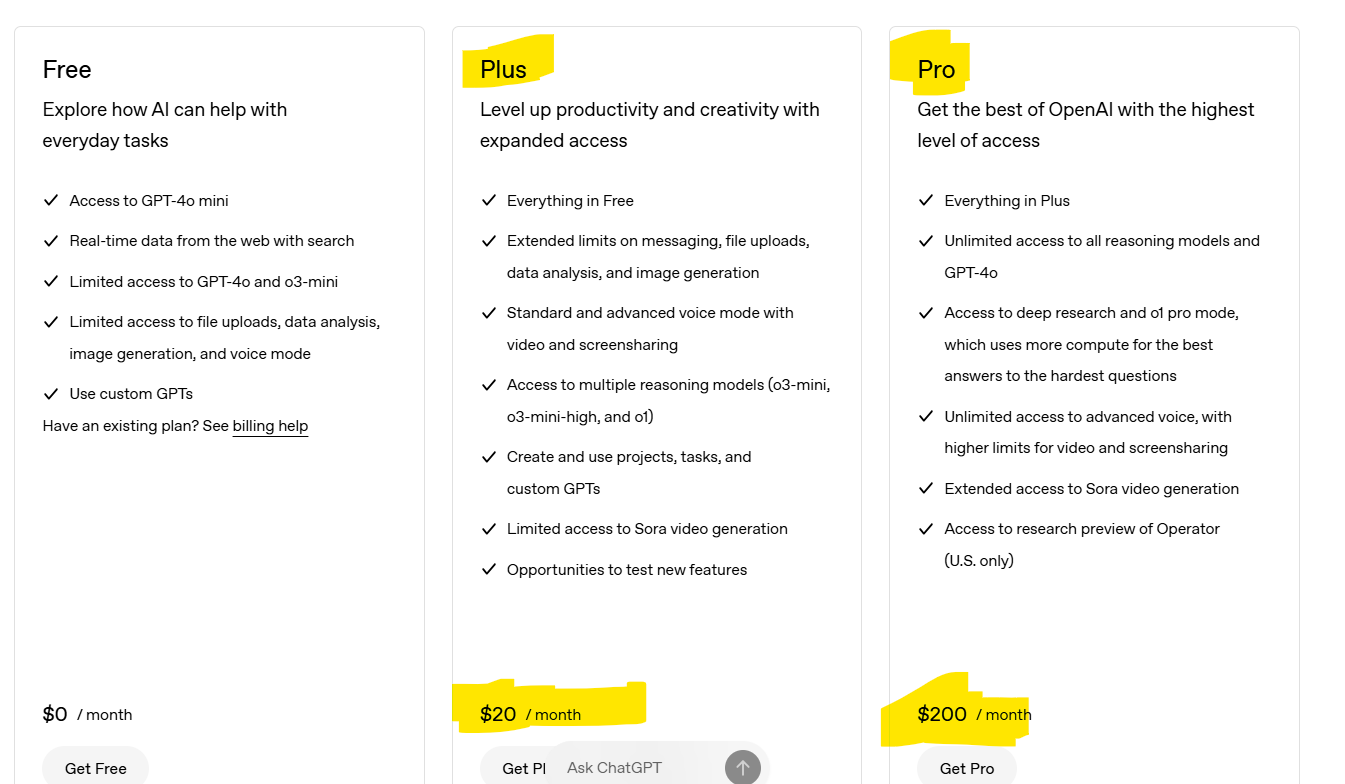

OpenAI has launched "Deep Research" - a powerful new agentic capability that conducts multi-step research on the internet for complex tasks. While this technology offers tremendous benefits, it also presents significant risks that require transparency and oversight.

Our Concern

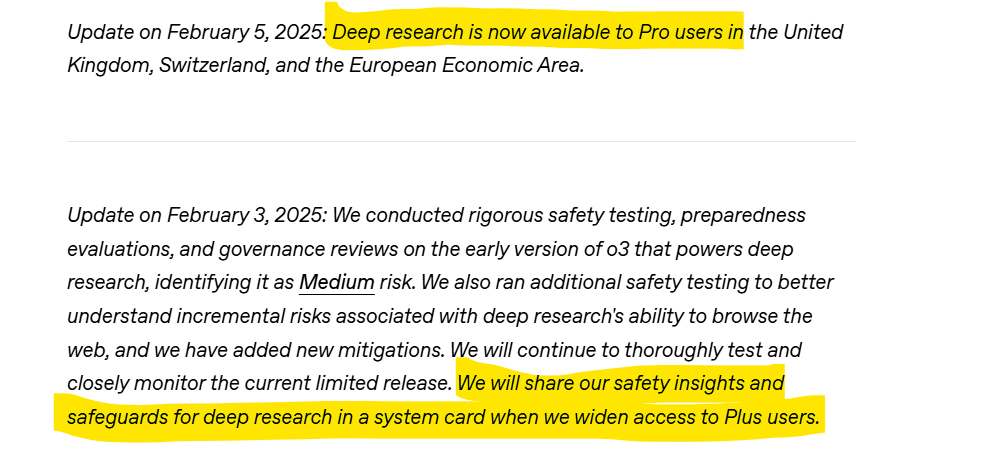

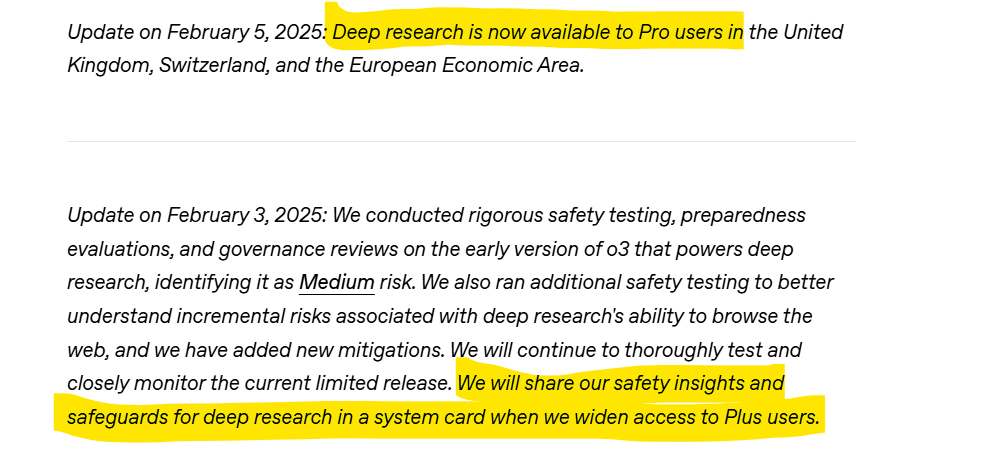

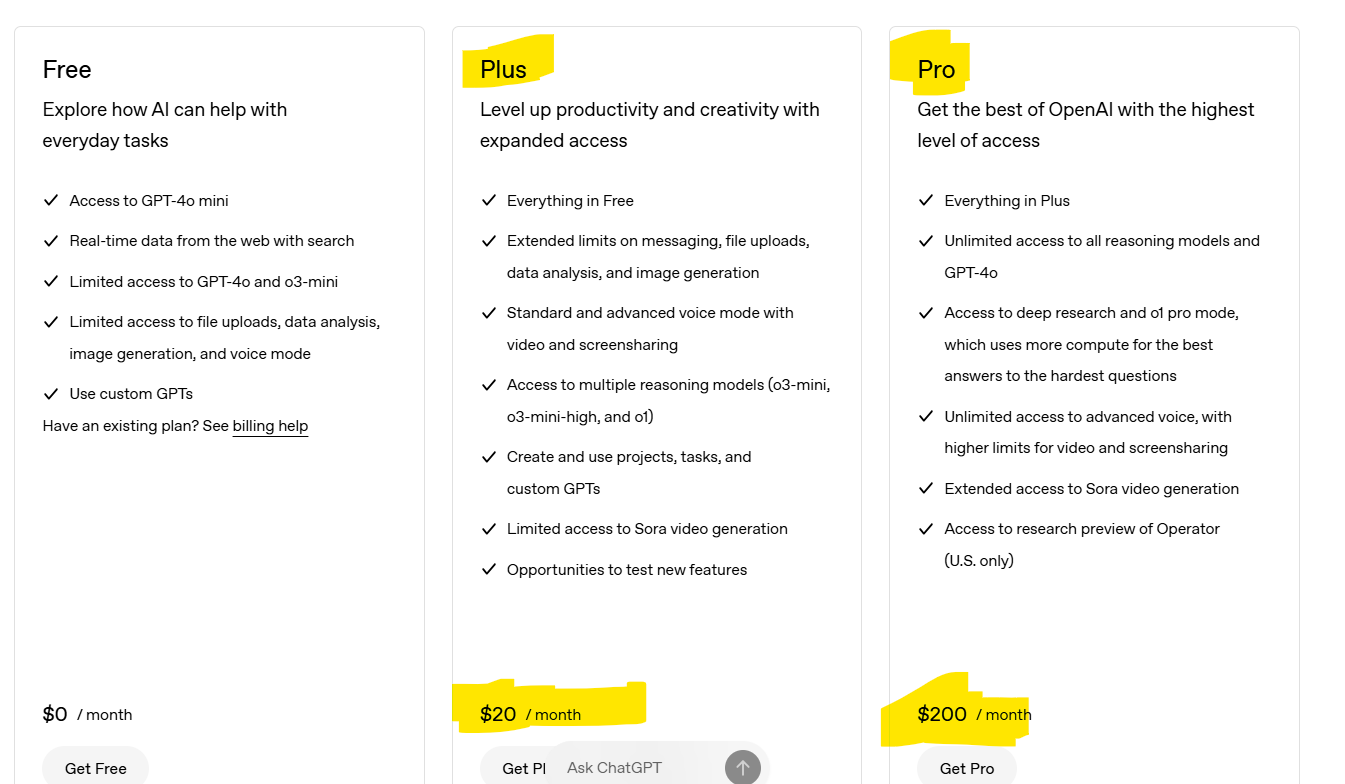

OpenAI has committed to releasing a system card for Deep Research only when it widens access to Plus users, even though it has already deployed the feature to Pro users in the UK, Switzerland, and the EEA.

This approach raises serious questions:

- Is it ASSUMED there are NO RISKS from Pro users in these regions?

- Why delay crucial safety documentation when the technology is already deployed to Pro users?

- Is this delay motivated by financial interests rather than safety considerations?

The Risks Are Real

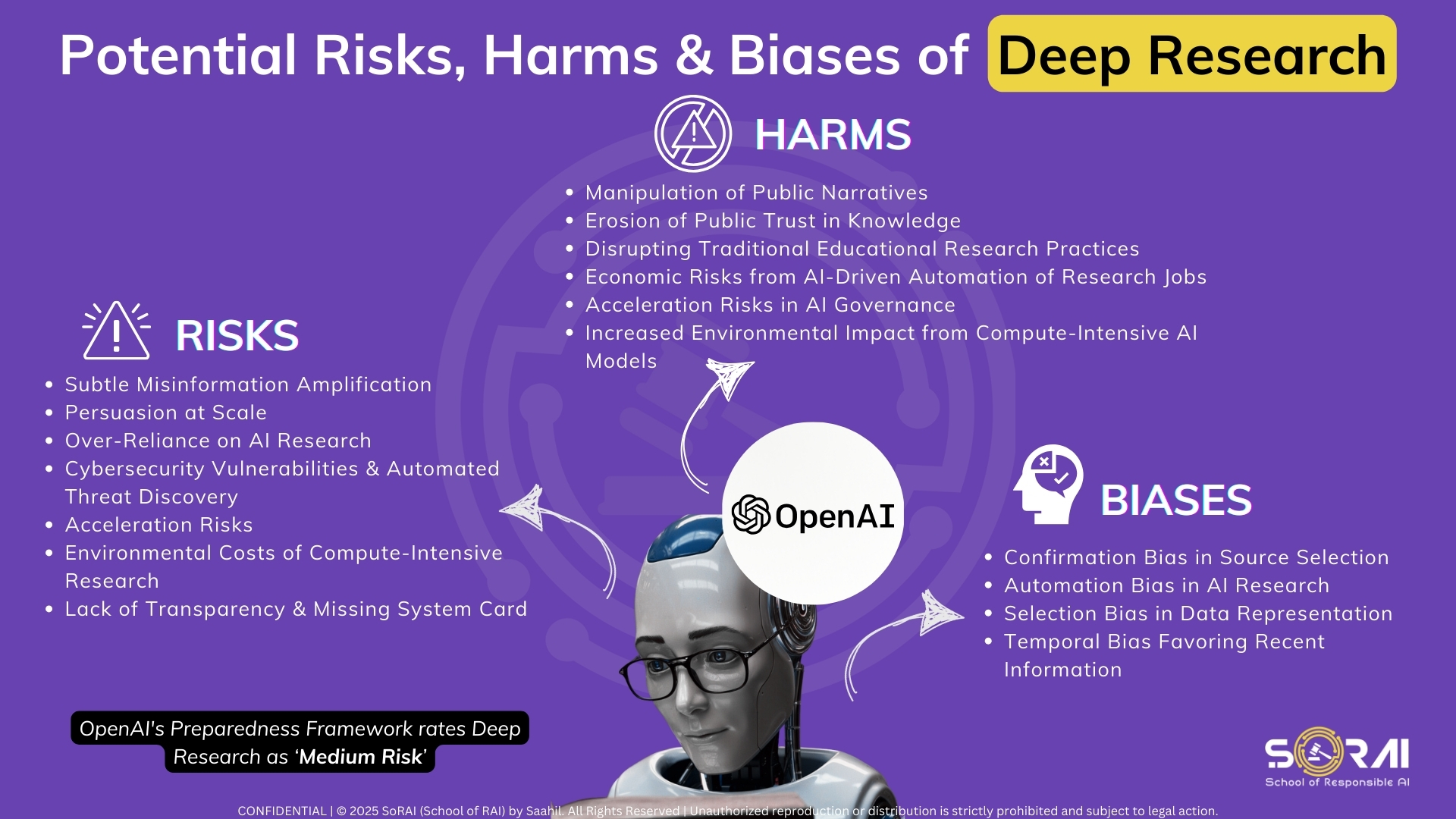

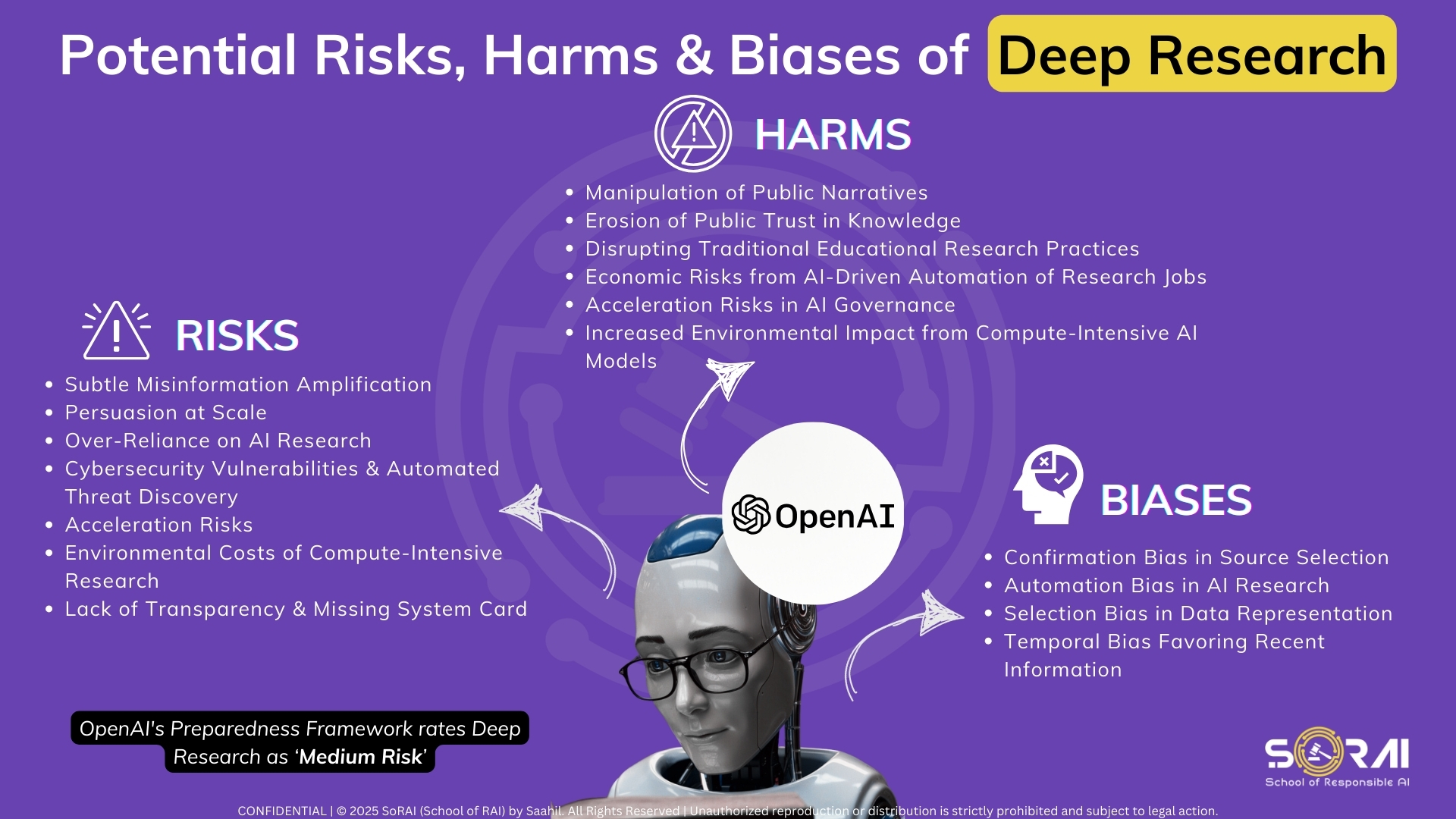

According to available information, Deep Research has been classified as "Medium Risk" on OpenAI's own Preparedness Framework. Independent analysis highlights five concerning risks:

- Subtle Misinformation Amplification: Deep Research may amplify biased or incorrect information through its automated synthesis process.

- Cybersecurity Vulnerabilities: The automated research capabilities could be exploited for reconnaissance, vulnerability discovery, or phishing research.

- Persuasion at Scale: The technology could be weaponized for mass persuasion through seemingly neutral but subtly biased research reports.

- Over-Reliance on AI Research: Users may place excessive trust in AI-synthesized research without verification.

- Risk Escalation: Today's "Medium Risk" could quickly become "High Risk" as capabilities evolve.

Our Demand

We, the undersigned, call on OpenAI to:

- Immediately release the System Card for Deep Research to all users, rather than waiting until access is widened to Plus users.

- Provide transparent risk assessments for each user tier (Pro, Plus, Team, etc.).

- Implement additional safeguards commensurate with the identified risks.

- Establish continuous monitoring and a transparent reporting mechanism to track and address emerging risks.

Why Sign This Petition

By signing this petition, you're advocating for responsible AI development that prioritizes safety and transparency over RUSHED DEPLOYMENT. All users deserve to understand the capabilities and limitations of the tools they use, particularly those with significant potential for harm.

Join us in demanding accountability from one of the world's most influential AI companies.

Victory

Petition created on 18 February 2025